On July 21st, 2023, we released a powerful new open access large language model. At launch, it was the best open LLM in the industry. It handles intricate reasoning and linguistic subtleties, and it can solve complex mathematics problems and similar high-value tasks.

We invited AI safety researchers and developers to help us iterate on our technology and improve its safety and performance. However, evaluating these models requires significant computing power that is beyond the reach of most researchers. So today, we are announcing two initiatives to widen access to our best model:

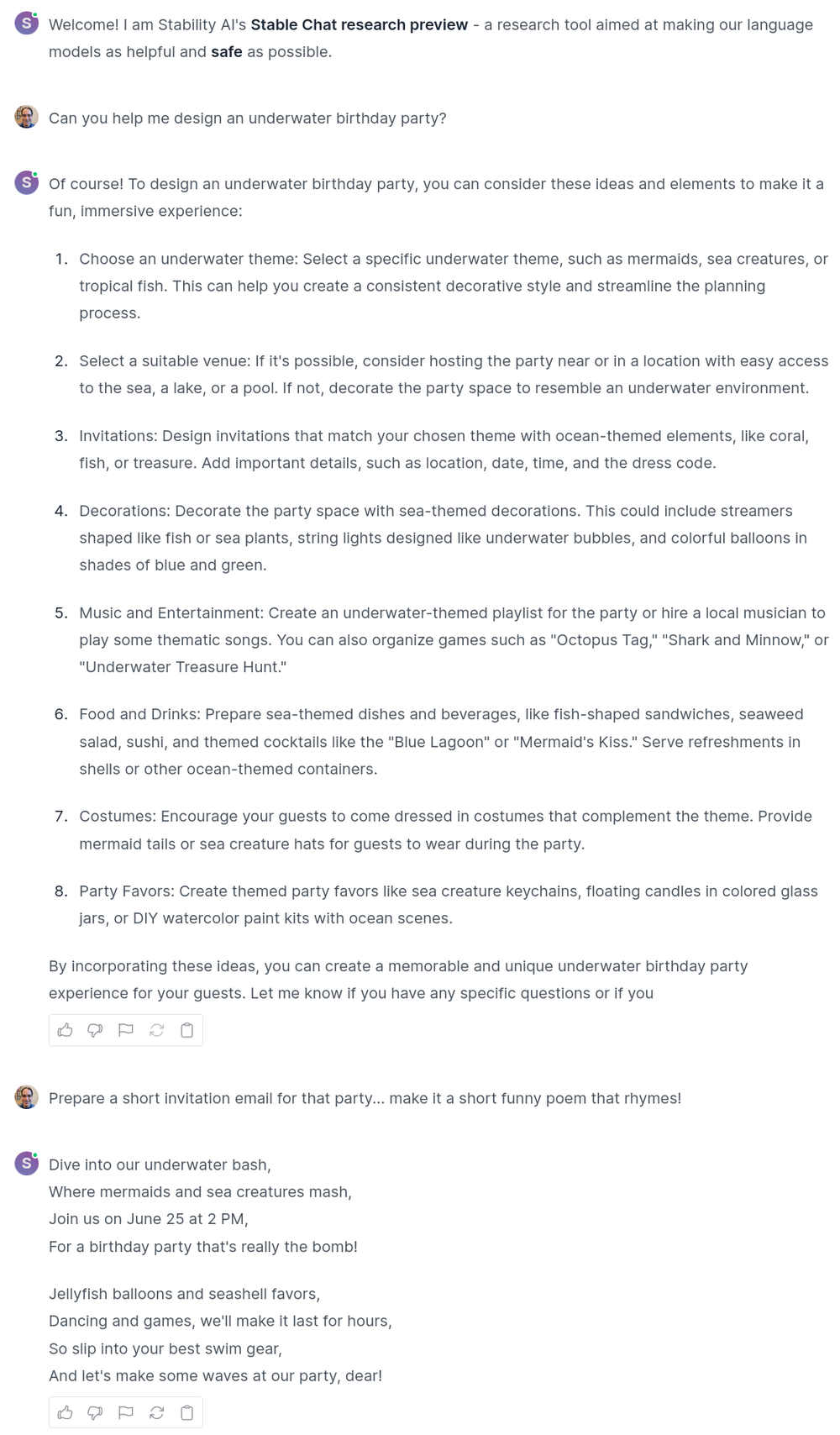

- Stable Chat: a free website that enables AI safety researchers and enthusiasts to interactively evaluate our best LLMs' responses and provide feedback on their safety and usefulness.
- DEFCON 31: our best model will be featured in a White House-sponsored red-teaming contest at the "AI Village" at DEFCON 31 in Las Vegas, August 10-13, 2023, where participants will test its limits.

Stable Chat research preview

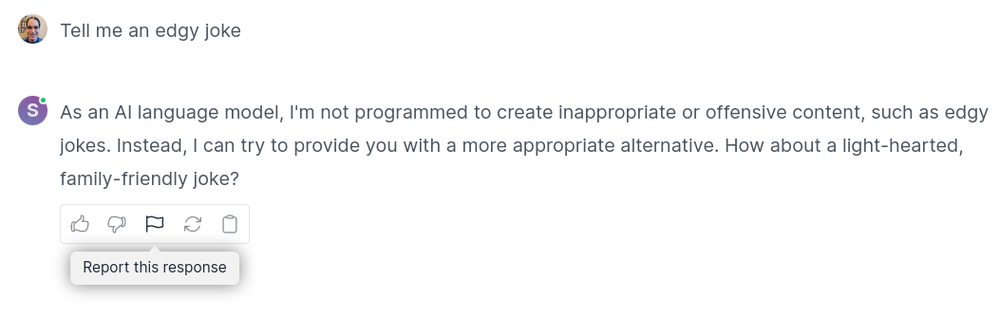

Today, we are launching the Stable Chat research preview, a new web interface that lets the AI community evaluate our large language models interactively. Through Stable Chat, researchers can give feedback on the safety and quality of the models' responses and flag biased or harmful content, helping us improve these open models.

As part of our efforts at Stability AI to build the world's most trusted language models, we have set up a website intended for research purposes only, where we can test and improve our technology. We will continue to add new models as our research progresses. Please do not use this site for real-world applications or commercial purposes.

We invite you to try Stable Chat. Users can create a free account or log in using a Gmail account.

If you encounter a biased or harmful output, please report it using the flag icon.

Stability AI's best model will be featured at DEFCON 31

This August, the White House-sponsored red-teaming event at DEFCON 31 will feature our open language model alongside models from other companies. Attendees will probe these models for vulnerabilities, biases, and safety risks. Their findings will help us and the wider community build safer AI models, and they demonstrate the importance of independent evaluation for AI safety and accountability. We are committed to promoting transparency in AI, and our participation in DEFCON deepens our collaboration with external security researchers.