ML Observability: Bringing Transparency to Payments and Beyond

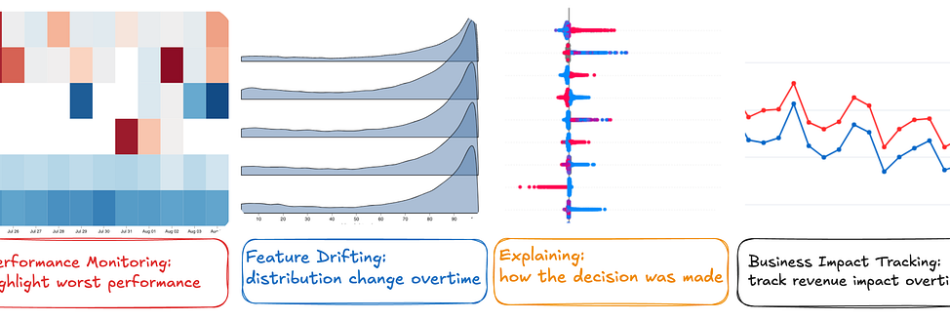

By Tanya Tang, Andrew Mehrmann

At Netflix, the importance of ML observability cannot be overstated. ML observability refers to the ability to monitor, understand, and gain insights into the performance and behavior of machine learning models in production. It involves tracking key metrics, detecting anomalies, diagnosing issues, and ensuring models are operating reliably and as intended. …
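The excerpt does not describe Netflix's own tooling, so the following is only a hypothetical illustration of what "tracking key metrics" and "detecting anomalies" can look like in the simplest case: a rolling z-score check that flags a model metric when it drifts far from its recent history. The function name `flag_anomalies`, its `window` and `threshold` parameters, and the sample approval-rate series are all invented for this sketch; production systems typically use more robust detectors.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Hypothetical helper: return the indices of points that deviate from
    the mean of the preceding `window` points by more than `threshold`
    sample standard deviations (a rolling z-score check)."""
    if window < 2:
        raise ValueError("window must be at least 2 to compute a standard deviation")
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]  # trailing window, excluding the current point
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Perfectly flat history: treat any change at all as anomalous.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Invented hourly approval rates for a hypothetical payments model.
approval_rates = [0.91, 0.92, 0.90, 0.91, 0.92, 0.91, 0.78, 0.91]
print(flag_anomalies(approval_rates))  # prints [6]
```

Here the drop to 0.78 at index 6 is flagged, while the recovery at index 7 is not, because the anomalous point has widened the trailing window's standard deviation. A fixed threshold keeps the sketch simple; the window size and threshold would need tuning for any real metric.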
