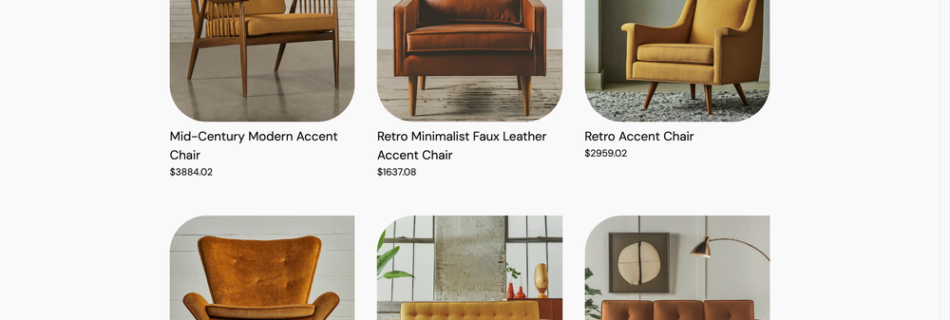

Test it out: an online shopping demo experience with Gemini and RAG

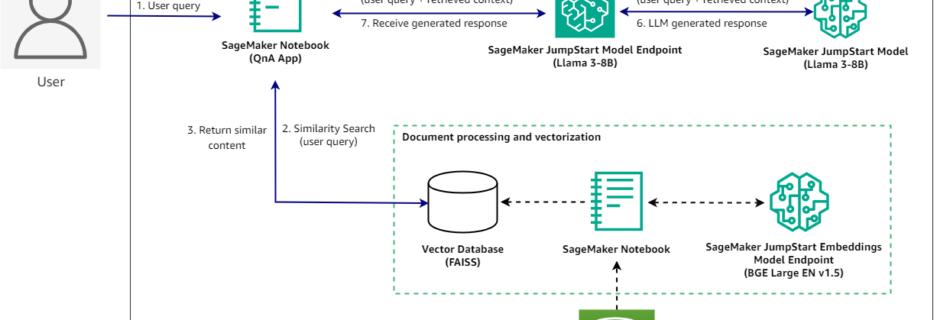

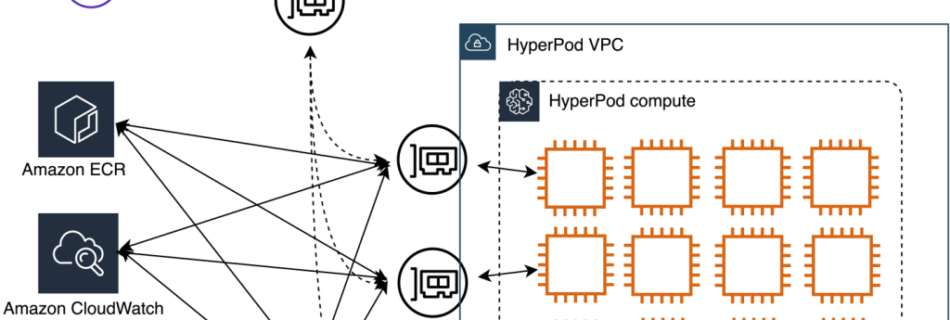

Earlier this year, tens of thousands of developers gathered in Las Vegas for Google Cloud Next ’24, which featured hundreds of sessions and more than 200 announcements. During the Developer Keynote, we showed how Gemini can enhance the shopping experience of an online store. Let’s dive into this demo and how it all worked.

Read more “Test it out: an online shopping demo experience with Gemini and RAG”