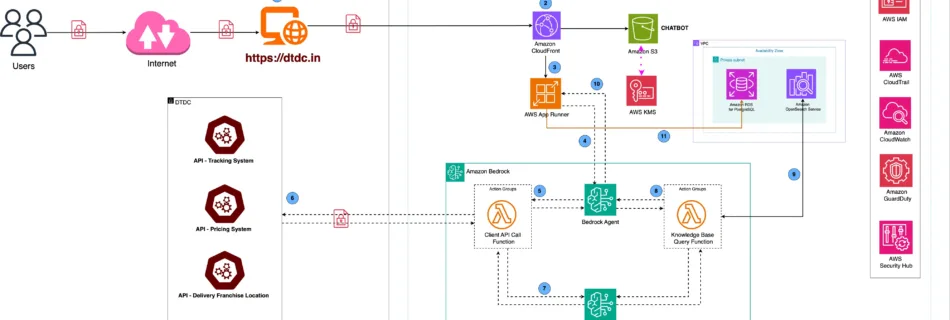

The DIVA logistics agent, powered by Amazon Bedrock

DTDC is India’s leading integrated express logistics provider, operating the largest network of customer access points in the country. Its technology-driven logistics solutions serve customers across diverse industry verticals, making it a trusted partner in delivering excellence. DTDC Express Limited receives over 400,000 customer queries each month, ranging from tracking …
