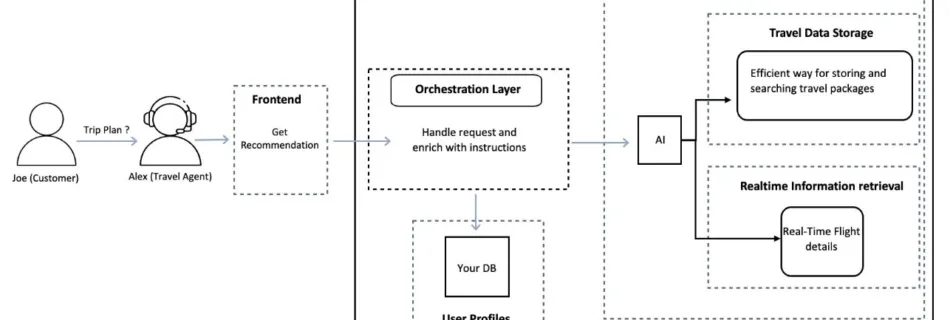

Build real-time travel recommendations using AI agents on Amazon Bedrock

Generative AI is transforming how businesses deliver personalized experiences across industries, including travel and hospitality. Travel agents are enhancing their services by offering personalized holiday packages, carefully curated for customer’s unique preferences, including accessibility needs, dietary restrictions, and activity interests. Meeting these expectations requires a solution that combines comprehensive travel knowledge with real-time pricing and …

Read more “Build real-time travel recommendations using AI agents on Amazon Bedrock”