Knowledge Bases for Amazon Bedrock now supports hybrid search

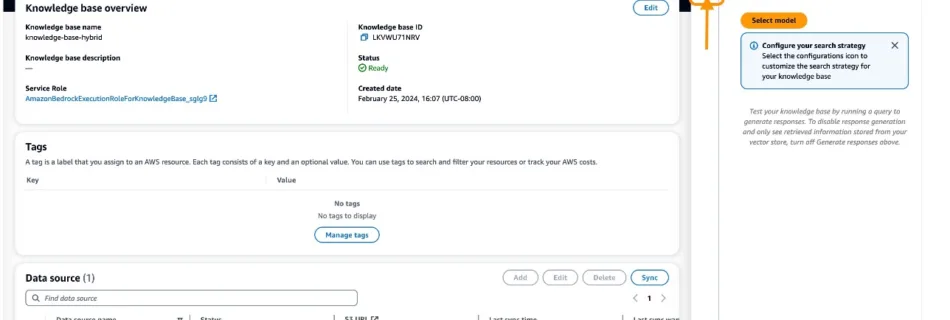

At AWS re:Invent 2023, we announced the general availability of Knowledge Bases for Amazon Bedrock. With a knowledge base, you can securely connect foundation models (FMs) in Amazon Bedrock to your company data for fully managed Retrieval Augmented Generation (RAG). In a previous post, we described how Knowledge Bases for Amazon Bedrock manages the end-to-end …
