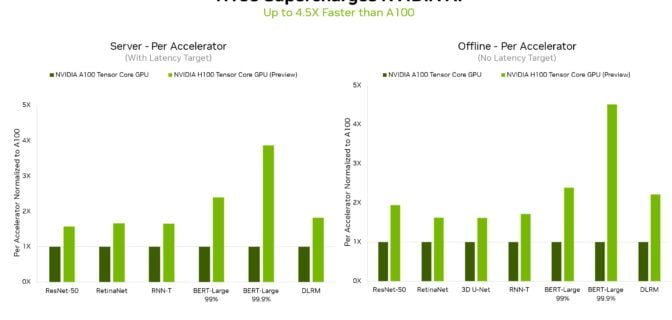

NVIDIA Hopper Sweeps AI Inference Benchmarks in MLPerf Debut

In their debut on the MLPerf industry-standard AI benchmarks, NVIDIA H100 Tensor Core GPUs set world records in inference on all workloads, delivering up to 4.5x more performance than previous-generation GPUs. The results demonstrate that Hopper is the premium choice for users who demand the utmost performance on advanced AI models. Additionally, NVIDIA A100 Tensor Core …

Read more “NVIDIA Hopper Sweeps AI Inference Benchmarks in MLPerf Debut”