Reinforcement Learning for Long-Horizon Interactive LLM Agents

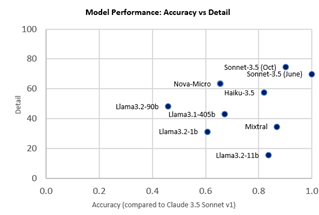

Interactive digital agents (IDAs) leverage APIs of stateful digital environments to perform tasks in response to user requests. While IDAs powered by instruction-tuned large language models (LLMs) can react to feedback from interface invocations in multi-step exchanges, they have not been trained in their respective digital environments. Prior methods accomplish less than half of tasks …
