Rethinking 5G: The cloud imperative

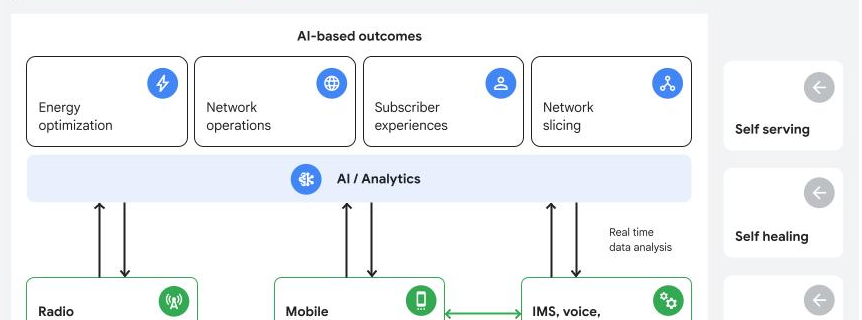

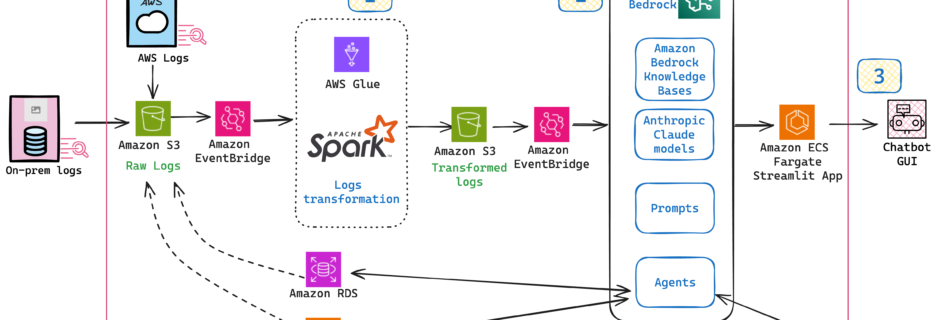

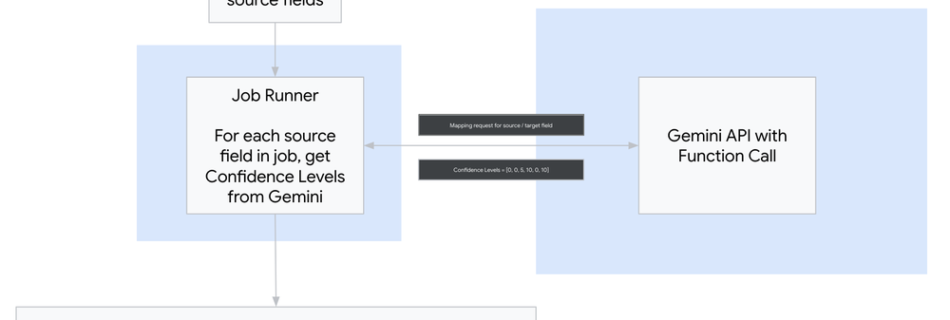

The telecommunications industry is at a critical juncture. The demands of 5G, the explosion of connected devices, and the ever-increasing complexity of network architectures require a fundamental shift in how networks are managed and operated.

The future is autonomous: autonomous networks driving efficiency and innovation

The future isn’t just about scale and performance; it’s …