Falcon 180B foundation model from TII is now available via Amazon SageMaker JumpStart

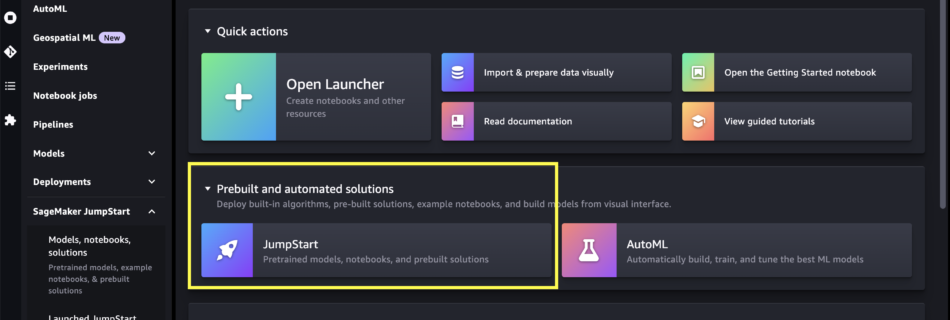

Today, we are excited to announce that the Falcon 180B foundation model, developed by the Technology Innovation Institute (TII) and trained on Amazon SageMaker, is available to customers through Amazon SageMaker JumpStart, where it can be deployed with one click to run inference. With 180 billion parameters and a massive 3.5-trillion-token training dataset, Falcon 180B is the largest and …