Modern organizations increasingly depend on robust cloud infrastructure to provide business continuity and operational efficiency. Operational health events – including operational issues, software lifecycle notifications, and more – serve as critical inputs to cloud operations management. Inefficiencies in handling these events can lead to unplanned downtime, unnecessary costs, and revenue loss for organizations.

However, managing cloud operational events presents significant challenges, particularly in complex organizational structures. With a vast array of services and resource footprints spanning hundreds of accounts, organizations can face an overwhelming volume of operational events occurring daily, making manual administration impractical. Although traditional programmatic approaches offer automation capabilities, they often come with significant development and maintenance overhead, in addition to increasingly complex mapping rules and inflexible triage logic.

This post shows you how to create an AI-powered, event-driven operations assistant that automatically responds to operational events. It uses Amazon Bedrock, AWS Health, AWS Step Functions, and other AWS services. The assistant can filter out irrelevant events (based on your organization’s policies), recommend actions, create and manage issue tickets in integrated IT service management (ITSM) tools to track actions, and query knowledge bases for insights related to operational events. By orchestrating a group of AI endpoints, the agentic AI design of this solution enables the automation of complex tasks, streamlining the remediation processes for cloud operational events. This approach helps organizations overcome the challenges of managing the volume of operational events in complex, cloud-driven environments with minimal human supervision, ultimately improving business continuity and operational efficiency.

Event-driven operations management

Operational events refer to occurrences within your organization’s cloud environment that might impact the performance, resilience, security, or cost of your workloads. Some examples of AWS-sourced operational events include:

- AWS Health events — Notifications related to AWS service availability, operational issues, or scheduled maintenance that might affect your AWS resources.

- AWS Security Hub findings — Alerts about potential security vulnerabilities or misconfigurations identified within your AWS environment.

- AWS Cost Anomaly Detection alerts – Notifications about unusual spending patterns or cost spikes.

- AWS Trusted Advisor findings — Opportunities for optimizing your AWS resources, improving security, and reducing costs.

However, operational events aren’t limited to AWS-sourced events. They can also originate from your own workloads or on-premises environments. In principle, any event that can integrate with your operations management and is of importance to your workload health qualifies as an operational event.

Operational event management is a comprehensive process that provides efficient handling of events from start to finish. It involves notification, triage, progress tracking, action, and archiving and reporting at a large scale. The following is a breakdown of the typical tasks included in each step:

- Notification of events:

- Format notifications in a standardized, user-friendly way.

- Dispatch notifications through instant messaging tools or emails.

- Triage of events:

- Filter out irrelevant or noise events based on predefined company policies.

- Analyze the events’ impact by examining their metadata and textual description.

- Convert events into actionable tasks and assigning responsible owners based on roles and responsibilities.

- Log tickets or page the appropriate personnel in the chosen ITSM tools.

- Status tracking of events and actions:

- Group related events into threads for straightforward management.

- Update ticket statuses based on the progress of event threads and action owner updates.

- Insights and reporting:

- Query and consolidate knowledge across various event sources and tickets.

- Create business intelligence (BI) dashboards for visual representation and analysis of event data.

A streamlined process should include steps to ensure that events are promptly detected, prioritized, acted upon, and documented for future reference and compliance purposes, enabling efficient operational event management at scale. However, traditional programmatic automation has limitations when handling multiple tasks. For instance, programmatic rules for event attribute-based noise filtering lack flexibility when faced with organizational changes, expansion of the service footprint, or new data source formats, leading growing complexity.

Automating impact analysis in traditional automation through keyword matching on free-text descriptions is impractical. Converting events to tickets requires manual effort to generate action hints and lacks correlation to the originating events. Extracting event storylines from long, complex threads of event updates is challenging.

Let’s explore an AI-based solution to see how it can help address these challenges and improve productivity.

Solution overview

The solution uses AWS Health and AWS Security Hub findings as sources of operational events to demonstrate the workflow. It can be extended to incorporate additional types of operational events—from AWS or non-AWS sources—by following an event-driven architecture (EDA) approach.

The solution is designed to be fully serverless on AWS and can be deployed as infrastructure as code (IaC) by usingf the AWS Cloud Development Kit (AWS CDK).

Slack is used as the primary UI, but you can implement the solution using other messaging tools such as Microsoft Teams.

The cost of running and hosting the solution depends on the actual consumption of queries and the size of the vector store and the Amazon Kendra document libraries. See Amazon Bedrock pricing, Amazon OpenSearch pricing and Amazon Kendra pricing for pricing details.

The full code repository is available in the accompanying GitHub repo.

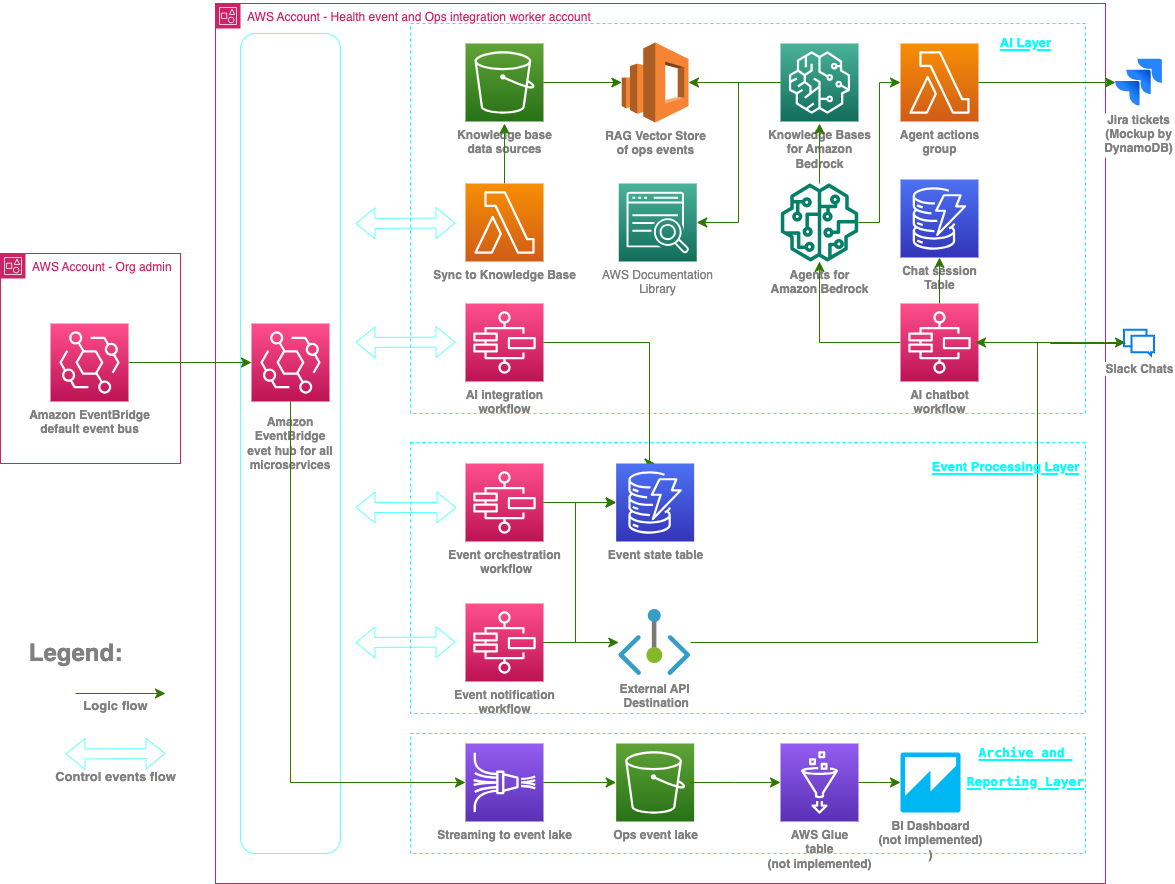

The following diagram illustrates the solution architecture.

Figure – solution architecture diagram

Solution walk-through

The solution consists of three microservice layers, which we discuss in the following sections.

Event processing layer

The event processing layer manages notifications, acknowledgments, and triage of actions. Its main logic is controlled by two key workflows implemented using Step Functions.

- Event orchestration workflow – This workflow is subscribed to and invoked by operational events delivered to the main Amazon EventBridge hub. It sends HealthEventAdded or SecHubEventAdded events back to the main event hub following the workflow in the following figure.

Figure – Event orchestration workflow

- Event notification workflow – This workflow formats notifications that are exchanged between Slack chat and backend microservices. It listens to control events such as HealthEventAdded and SecHubEventAdded.

Figure – Event notification workflow

AI layer

The AI layer handles the interactions between Agents for Amazon Bedrock, Knowledge Bases for Amazon Bedrock, and the UI (Slack chat). It has several key components.

OpsAgent is an operations assistant powered by Anthropic Claude 3 Haiku on Amazon Bedrock. It reacts to operational events based on the event type and text descriptions. OpsAgent is supported by two other AI model endpoints on Amazon Bedrock with different knowledge domains. An action group is defined and attached to OpsAgent, allowing it to solve more complex problems by orchestrating the work of AI endpoints and taking actions such as creating tickets without human supervisions.

OpsAgent is pre-prompted with required company policies and guidelines to perform event filtering, triage, and ITSM actions based on your requirements. See the sample escalation policy in the GitHub repo (between escalation_runbook tags).

OpsAgent uses two supporting AI model endpoints:

- The events expert endpoint uses the Amazon Titan in Amazon Bedrock foundation model (FM) and Amazon OpenSearch Serverless to answer questions about operational events using Retrieval Augmented Generation (RAG).

- The ask-aws endpoint uses the Amazon Titan model and Amazon Kendra as the RAG source. It contains the latest AWS documentation on selected topics. You must syncronize the Amazon Kendra data sources to ensure the underlying AI model is using the latest documentation. Your can do this using the AWS Management Console after the solution is deployed.

These dedicated endpoints with specialized RAG data sources help break down complex tasks, improve accuracy, and make sure the correct model is used.

The AI layer also includes of two AI orchestration Step Functions workflows. The workflows manage the AI agent, AI model endpoints, and the interaction with the user (through Slack chat):

- The AI integration workflow defines how the operations assistant reacts to operational events based on the event type and the text descriptions of those events. The following figure illustrates the workflow.

Figure – AI integration workflow

- The AI chatbot workflow manages the interaction between users and the OpsAgent assistant through a chat interface. The chatbot handles chat sessions and context.

Figure: AI chatbot workflow

Archiving and reporting layer

The archiving and reporting layer handles streaming, storing, and extracting, transforming, and loading (ETL) operational event data. It also prepares a data lake for BI dashboards and reporting analysis. However, this solution doesn’t include an actual dashboard implementation; it prepares an operational event data lake for later development.

Use case examples

You can use this solution for automated event notification, autonomous event acknowledgement, and action triage by setting up a virtual supervisor or operator that follows your organization’s policies. The virtual operator is equipped with multiple AI capabilities—each of which is specialized in a specific knowledge domain—such as generating recommended actions or taking actions to issue tickets in ITSM tools, as shown in the following figure.

Figure – use case example 1

The virtual event supervisor filters out noise based on your policies, as illustrated in the following figure.

Figure – use case example 2

AI can use the tickets that are related to a specific AWS Health event to provide the latest status updates on those tickets, as shown in the following figure.

Figure – use case example 3

The following figure shows how the assistant evaluates complex threads of operational events to provide valuable insights.

Figure – use case example 4

The following figure shows a more sophisticated use case.

Figure – use case example 5

Prerequisites

To deploy this solution, you must meet the following prerequisites:

- Have at least one AWS account with permissions to create and manage the necessary resources and components for the application. If you don’t have an AWS account, see How do I create and activate a new Amazon Web Services account?. The project uses a typical setup of two accounts, where one is the organization’s health administrator account and the other is the worker account hosting backend microservices. The worker account can be the same as the administrator account if you choose to use a single account setup.

- Make sure you have access to Amazon Bedrock FMs in your preferred AWS Region in the worker account. The FMs used in the post are Anthropic Claude 3 Haiku, and Amazon Titan Text G1 – Premier.

- Enable the AWS Health Organization view and delegate an administrator account in your AWS management account if you want to manage AWS Health events across your entire organization. Enabling AWS Health Organization view is optional if you only need to source operational events from a single account. Delegation of a separate administrator account for AWS Health is also optional if you want to manage all operational events from your AWS management account.

- Enable AWS Security Hub in your AWS management account. Optionally, enable Security Hub with Organizations integration if you want to monitor security findings for the entire organization instead of just a single account.

- Have a Slack workspace with permissions to configure a Slack app and set up a channel.

- Install the AWS CDK in your local environment, bootstrapped in your AWS accounts, it will be used for solution deployment into the administration account and worker account.

- Have AWS Serverless Application Model (AWS SAM) and Docker installed in your development environment to build AWS Lambda packages

Create a Slack app and set up a channel

Set up Slack:

- Create a Slack app from the manifest template, using the content of the slack-app-manifest.json file from the GitHub repository.

- Install your app into your workspace, and take note of the Bot User OAuth Token value to be used in later steps.

- Take note of the Verification Token value under Basic Information of your app, you will need it in later steps.

- In your Slack desktop app, go to your workspace and add the newly created app.

- Create a Slack channel and add the newly created app as an integrated app to the channel.

- Find and take note of the channel ID by choosing (right-clicking) the channel name, choosing Additional options to access the More menu, and choosing Open details to see the channel details.

Prepare your deployment environment

Use the following commands to ready your deployment environment for the worker account. Make sure you aren’t running the command under an existing AWS CDK project root directory. This step is required only if you chose a worker account that’s different from the administration account:

Use the following commands to ready your deployment environment for the administration account. Make sure you aren’t running the commands under an existing AWS CDK project root directory:

Copy the GitHub repo to your local directory

Use the following code to copy the GitHub repo to your local directory.:

Create an .env file

Create an .env file containing the following code under the project root directory. Replace the variable placeholders with your account information:

Deploy the solution using the AWS CDK

Deploy the processing microservice to your worker account (the worker account can be the same as your administrator account):

- In the project root directory, run the following command:

cdk deploy --all --require-approval never - Capture the HandleSlackCommApiUrl stack output URL,

- Go to your Slack app and navigate to Event Subscriptions, Request URL Change,

- Update the URL value with the stack output URL and save your settings.

Test the solution

Test the solution by sending a mock operational event to your administration account . Run the following AWS Command Line Interface (AWS CLI) command:

aws events put-events --entries file://test-events/mockup-events.json

You will receive Slack messages notifying you about the mock event followed by automatic update from the AI assistant reporting the actions it took and the reasons for each action. You don’t need to manually choose Accept or Discharge for each event.

Try creating more mock events based on your past operational events and test them with the use cases described in the Use case examples section.

If you have just enabled AWS Security Hub in your administrator account, you might need to wait for up to 24 hours for any findings to be reported and acted on by the solution. AWS Health events, on the other hand, will be reported whenever applicable.

Clean up

To clean up your resources, run the following command in the CDK project directory: cdk destroy --all

Conclusion

This solution uses AI to help you automate complex tasks in cloud operational events management, bringing new opportunities for you to further streamline cloud operations management at scale with improved productivity, and operational resilience.

To learn more about the AWS services used in this solution, see:

About the author

Sean Xiaohai Wang is a Senior Technical Account Manager at Amazon Web Services. He helps enterpise customers build and operate efficiently on AWS.

Sean Xiaohai Wang is a Senior Technical Account Manager at Amazon Web Services. He helps enterpise customers build and operate efficiently on AWS.