We recently introduced a new capability in the Amazon SageMaker Python SDK that lets data scientists run machine learning (ML) code authored in their preferred integrated development environment (IDE) or notebook, along with its runtime dependencies, as Amazon SageMaker training jobs with minimal changes to the code they experiment with locally. Data scientists typically carry out several iterations of experimentation in data processing and model training while working on any ML problem. They want to run this ML code and carry out the experimentation with ease of use and minimal code change. Amazon SageMaker Model Training helps data scientists run fully managed large-scale training jobs on AWS’s compute infrastructure. SageMaker Training also provides advanced tools such as Amazon SageMaker Debugger and Profiler to debug and analyze large-scale training jobs.

For customers with small budgets, small teams, and tight timelines, every single new concept and line of code rewritten to run on SageMaker makes them less productive towards their core tasks, namely data processing and training ML models. They want to write code once in the framework of their choice and be able to move seamlessly from running code in their notebooks or laptops to running code at scale using SageMaker capabilities.

With this new capability of the SageMaker Python SDK, data scientists can onboard their ML code to the SageMaker Training platform in a few minutes. You just need to add a single line of code to your ML code, and SageMaker intelligently comprehends your code along with the datasets and workspace environment setup and runs it as a SageMaker Training job. You can then take advantage of the key capabilities of the SageMaker Training platform, like the ability to scale jobs easily, and other associated tools like Debugger and Profiler. In this release, you can run your local ML Python code as a single-node SageMaker training job or as multiple parallel jobs. Distributed training jobs (across multiple nodes) are not supported by remote functions.

In this post, we show you how to use this new capability to run local ML code as a SageMaker Training job.

Solution overview

You can now run your ML code written in your IDE or notebook as a SageMaker Training job by annotating the function, which acts as an entry point to the user’s code base, with a simple decorator. Upon invocation, this capability automatically takes a snapshot of all the associated variables, functions, packages, environment variables, and other runtime requirements from your ML code, serializes them, and submits them as a SageMaker Training job. It integrates with the recently announced SageMaker Python SDK feature for setting default values for parameters. This capability simplifies the SageMaker constructs that you need to learn to be able to run code using SageMaker Training. Data scientists can write, debug, and iterate their code in any preferred IDE (such as Amazon SageMaker Studio, notebooks, VS Code, or PyCharm). When ready, you can annotate your Python function with the @remote decorator and run it as a SageMaker job at scale.
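To make the serialize-and-run step concrete, the following is a minimal local sketch, not the SDK's implementation. It assumes the standard library pickle module as a stand-in for the SDK's serializer, and the names local_remote and scale are hypothetical. The decorator serializes the call's arguments, runs the function on the deserialized copies, and returns a deserialized copy of the result, which mirrors how inputs and outputs cross the boundary between your process and a job by value.

```python
import functools
import pickle


def local_remote(func):
    """Hypothetical stand-in for a remote decorator; everything runs locally."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        # Serialize the inputs, as they would be before being shipped to a job.
        payload = pickle.dumps((args, kwargs))
        # "Job side": rebuild the inputs from bytes and run the function.
        job_args, job_kwargs = pickle.loads(payload)
        result = func(*job_args, **job_kwargs)
        # Serialize the output and rebuild it on the caller's side.
        return pickle.loads(pickle.dumps(result))

    return wrapper


@local_remote
def scale(values, factor):
    # Mutates its argument in place; the caller's list is unaffected
    # because the function only ever sees a deserialized copy.
    for i in range(len(values)):
        values[i] *= factor
    return values


data = [1, 2, 3]
scaled = scale(data, factor=10)
print(scaled)  # [10, 20, 30]
print(data)    # [1, 2, 3]
```

One practical consequence of passing values this way is that arguments and return values need to be serializable, and in-place changes made inside the function do not propagate back to the caller.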

This capability takes familiar open-source Python objects as arguments and outputs. Furthermore, you don’t need to understand container lifecycle management and can simply run your workloads across different compute contexts (such as a local IDE, Studio, or training jobs) with minimal configuration overheads. To run any local code as a SageMaker Training job, this capability infers the configurations required to run jobs, such as the AWS Identity and Access Management (IAM) role, encryption key, and network configuration, from the Studio or IDE settings (which can be the default settings) and passes them to the platform by default. You have the flexibility to customize your runtime in the SageMaker managed infrastructure using the inferred configuration or override them at the SDK-level by passing them as arguments to the decorator.
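The precedence between inferred configuration and decorator arguments can be pictured with a small sketch. This is only an illustration of the rule described above, not SDK code: the helper resolve_settings, the setting names, and the role ARN are all hypothetical.

```python
def resolve_settings(inferred, **overrides):
    """Merge inferred defaults with explicit overrides.

    Hypothetical helper: an override wins whenever it is not None.
    """
    resolved = dict(inferred)  # copy so the inferred defaults stay untouched
    for key, value in overrides.items():
        if value is not None:
            resolved[key] = value
    return resolved


# Values that would come from the environment or a defaults file (made up here).
inferred = {
    "role": "arn:aws:iam::111122223333:role/ExampleRole",
    "instance_type": "ml.m5.large",
    "subnets": ["subnet-1234"],
}

# Only instance_type is overridden; role is left to the inferred value.
settings = resolve_settings(inferred, instance_type="ml.g4dn.xlarge", role=None)
print(settings["instance_type"])  # ml.g4dn.xlarge
print(settings["role"])           # arn:aws:iam::111122223333:role/ExampleRole
```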

This new capability of the SageMaker Python SDK transforms your ML code in an existing workspace environment and any associated data processing code and datasets into a SageMaker Training job. This capability looks for ML code wrapped inside a @remote decorator and automatically translates it into a SageMaker Training job, whether you invoke it from Studio or from a local IDE such as PyCharm.

In the following sections, we walk through the features of this new capability and how to launch Python functions as SageMaker Training jobs.

Prerequisites

To use this new SageMaker Python SDK capability and run the code associated with this post, you need the following prerequisites:

- An AWS account that will contain all your AWS resources

- An IAM role to access SageMaker

- Access to Studio or a SageMaker notebook instance or an IDE such as PyCharm

Use the SDK from Studio and SageMaker notebooks

You can use this capability from Studio by launching a notebook and wrapping your code with a @remote decorator inside the notebook. You first need to import the remote function using the following code:

    from sagemaker.remote_function import remote

When you use the decorator function, this capability will automatically interpret the function of your code and run it as a SageMaker Training job.

You can also use this capability from a SageMaker notebook instance. You first need to start a notebook instance, open Jupyter or Jupyter Lab on it, and launch a notebook. Then import the remote function as shown in the preceding code and wrap your code with the @remote decorator. We include an example of how to use the decorator function and the associated settings later in this post.

Use the SDK from your local environment

You can also use this capability from your local IDE. As a prerequisite, you must have the AWS Command Line Interface (AWS CLI), SageMaker Python SDK, and AWS SDK for Python (Boto3) installed in your local environment. You need to import these libraries in your code, set the SageMaker session, specify settings, and decorate your function with the @remote decorator. In the following example code, we run a simple divide function as a SageMaker Training job:

    import boto3
    import sagemaker
    from sagemaker.remote_function import remote

    # Create a SageMaker session bound to the Region where the job should run
    sm_session = sagemaker.Session(boto_session=boto3.session.Session(region_name="us-west-2"))

    settings = dict(
        sagemaker_session=sm_session,
        role="<IAM_ROLE_NAME>",  # replace with your IAM role
        instance_type="ml.m5.xlarge",
    )

    @remote(**settings)
    def divide(x, y):
        return x / y

    if __name__ == "__main__":
        print(divide(2, 3.0))

We can use a similar methodology to run advanced functions as training jobs, as shown in the next section.

Launch Python functions as SageMaker jobs

The new SageMaker Python SDK feature allows you to run Python functions as SageMaker Training jobs. Any Python code, including ML training code developed by data scientists using their preferred local IDEs (PyCharm, VS Code), SageMaker notebooks, or Studio notebooks, can be launched as a managed SageMaker job.

In ML workloads using this capability, associated datasets, dependencies, and workspace environment setups are serialized along with the ML code and run as a SageMaker job, either synchronously or asynchronously.

You can add a @remote decorator annotation to any Python code including a local ML processing or training function to launch it as a managed SageMaker Training job, thereby taking advantage of the scale, performance, and cost benefits of SageMaker. This can be achieved with minimal code changes by adding a decorator to the Python function code. Invocation to the decorated function is run synchronously, and the function run waits until the SageMaker job is complete.

In the following example, we use the @remote decorator to launch SageMaker jobs in decorator mode using an ml.m5.large instance. SageMaker uses training jobs to launch this function as a managed job.

    from sagemaker.remote_function import remote
    import numpy as np

    @remote(instance_type="ml.m5.large")
    def matrix_multiply(a, b):
        return np.matmul(a, b)

    a = np.array([[1, 0], [0, 1]])
    b = np.array([1, 2])

    # Use array_equal: a bare == on arrays yields an array, which assert cannot evaluate
    assert np.array_equal(matrix_multiply(a, b), np.array([1, 2]))

You can also use decorator mode to launch SageMaker jobs that need additional Python packages and dependencies, for example by listing them in an environment.yml file. Networking settings such as VPC, subnets, and security groups for SageMaker training jobs can be set in a configuration file. This allows ML engineers and admins to configure these settings so data scientists can focus on ML model building and iterate faster. See the following code:

    import os

    from sagemaker.remote_function import remote
    from transformers import AutoModelForSequenceClassification

    @remote(instance_type="ml.g4dn.xlarge", dependencies="./environment.yml")
    def train_hf_model(
        train_input_path, test_input_path, s3_output_path=None,
        *, epochs=1, train_batch_size=32, eval_batch_size=64,
        warmup_steps=500, learning_rate=5e-5
    ):
        model_name = "distilbert-base-uncased"
        model = AutoModelForSequenceClassification.from_pretrained(model_name)
        # ... <TRUNCATED>
        return os.path.join(s3_output_path, model_dir), eval_result

You can use RemoteExecutor to launch Python functions as SageMaker jobs asynchronously. The executor asynchronously polls SageMaker Training jobs to update the status of the job. The RemoteExecutor class is an implementation of concurrent.futures.Executor, which is used to submit SageMaker Training jobs asynchronously. See the following code:

    import os

    from sagemaker.remote_function import RemoteExecutor
    from transformers import AutoModelForSequenceClassification

    def train_hf_model(
        train_input_path, test_input_path, s3_output_path=None,
        *, epochs=1, train_batch_size=32, eval_batch_size=64,
        warmup_steps=500, learning_rate=5e-5
    ):
        model_name = "distilbert-base-uncased"
        model = AutoModelForSequenceClassification.from_pretrained(model_name)
        # ... <TRUNCATED>
        return os.path.join(s3_output_path, model_dir), eval_result

    with RemoteExecutor(instance_type="ml.g4dn.xlarge", dependencies="./requirements.txt") as e:
        # Submit the training function itself; the hyperparameters are keyword-only
        future = e.submit(
            train_hf_model, train_input_path, test_input_path, s3_output_path,
            epochs=epochs, train_batch_size=train_batch_size, eval_batch_size=eval_batch_size,
            warmup_steps=warmup_steps, learning_rate=learning_rate,
        )

Customize the runtime environment

Decorator mode and RemoteExecutor allow you to define and customize the runtime environments for the SageMaker job. The runtime dependencies, including Python packages and environment variables for SageMaker jobs, can be specified to customize the runtime. In order to run local Python code as SageMaker managed jobs, the Python packages and dependencies need to be made available to SageMaker. ML engineers or data science administrators can configure networking and security configurations such as VPC, subnets, and security groups for SageMaker jobs, so data scientists can use these centrally managed configurations while launching SageMaker jobs. To specify dependencies, you can use either a requirements.txt file or a Conda environment.yml file.

When dependencies are defined with requirements.txt, the packages will be installed using pip in the job runtime. If the image used for running the job comes with Conda environments, packages will be installed in the Conda environment declared to use for jobs. The following code shows an example requirements.txt file:

    datasets
    transformers
    torch
    scikit-learn
    s3fs==0.4.2
    sagemaker>=2.148.0

You can pass your Conda environment.yml file to create the Conda environment you would like your code to run in during the training job. If the image used for running the job declares a Conda environment to run the code under, we will update the declared Conda environment with the given specification. The following code is an example of a Conda environment.yml file:

    name: sagemaker_example
    channels:
      - conda-forge
    dependencies:
      - python=3.10
      - pandas
      - pip:
        - sagemaker

Alternatively, you can set dependencies="auto_capture" to let the SageMaker Python SDK capture the installed dependencies in the active Conda environment. You must have an active Conda environment for auto_capture to work. Because auto_capture has prerequisites, we recommend that you pass in your dependencies as a requirements.txt or Conda environment.yml file as described in the previous section.

For more details, refer to Run your local code as a SageMaker Training job.

Configurations for SageMaker jobs

Infrastructure-related settings can be offloaded to a configuration file that admin users could help set up. You only need to set it up one time. Infrastructure settings cover the network configuration, IAM roles, Amazon Simple Storage Service (Amazon S3) folder for input, output data, and tags. Refer to Configuring and using defaults with the SageMaker Python SDK for more details.

    SchemaVersion: '1.0'
    SageMaker:
      PythonSDK:
        Modules:
          RemoteFunction:
            Dependencies: path/to/requirements.txt
            EnvironmentVariables: {"EnvVarKey": "EnvVarValue"}
            ImageUri: 366666666666.dkr.ecr.us-west-2.amazonaws.com/my-image:latest
            InstanceType: ml.m5.large
            RoleArn: arn:aws:iam::366666666666:role/MyRole
            S3KmsKeyId: somekmskeyid
            S3RootUri: s3://my-bucket/my-project
            SecurityGroupIds:
              - sg123
            Subnets:
              - subnet-1234
            Tags:
              - {"Key": "someTagKey", "Value": "someTagValue"}
            VolumeKmsKeyId: somekmskeyid

Implementation

Deep learning models built with frameworks like PyTorch or TensorFlow can also be run from Studio by launching the code as a training job from within the notebook. To showcase this capability in Studio, you can clone this repo into your Studio environment and run the notebook located in the GitHub repository.

This example demonstrates an end-to-end binary text classification use case. We are using the Hugging Face transformers and datasets library to fine-tune a pre-trained transformer on binary text classification. In particular, the pre-trained model will be fine-tuned using the IMDb dataset.

When you clone the repository, you should locate the following files:

- config.yaml – Most of the decorator arguments can be offloaded to the configuration file in order to separate out the infrastructure-related settings from the code base

- huggingface.ipynb – This contains the code to fine-tune a pre-trained Hugging Face model using the IMDb dataset

- requirements.txt – This file lists the dependencies needed to run the function in this notebook remotely on a GPU instance as a training job

When you open the notebook, you will be prompted to set up the notebook environment. You can select the Data Science 3.0 image with the Python 3 kernel and ml.m5.large as the fast launch instance type for running the notebook code. A fast launch instance type spins up the notebook environment significantly faster.

The training job will be run in an ml.g4dn.xlarge instance as defined in the config.yaml file:

    SchemaVersion: '1.0'
    SageMaker:
      PythonSDK:
        Modules:
          RemoteFunction:
            # role arn is not required if in SageMaker Notebook instance or SageMaker Studio
            # Uncomment the following line and replace with the right execution role if in a local IDE
            # RoleArn: <IAM_ROLE_ARN>
            InstanceType: ml.g4dn.xlarge
            Dependencies: ./requirements.txt

The requirements.txt file lists the dependencies needed to run the function that trains the Hugging Face model:

    datasets
    transformers
    torch
    scikit-learn
    # lock s3fs to this specific version as more recent ones introduce dependency on aiobotocore, which is not compatible with botocore
    s3fs==0.4.2
    sagemaker>=2.148.0,<3

The Hugging Face notebook showcases how to run the training remotely via the @remote decorator, which runs the function synchronously. Therefore, the call that trains the model waits until the SageMaker Training job is complete. The training runs remotely on a GPU instance, and the instance type is defined in the preceding configuration file.

After you run the training job, you can run the rest of the cells in the notebook to inspect the evaluation metrics and classify the text on our trained model.

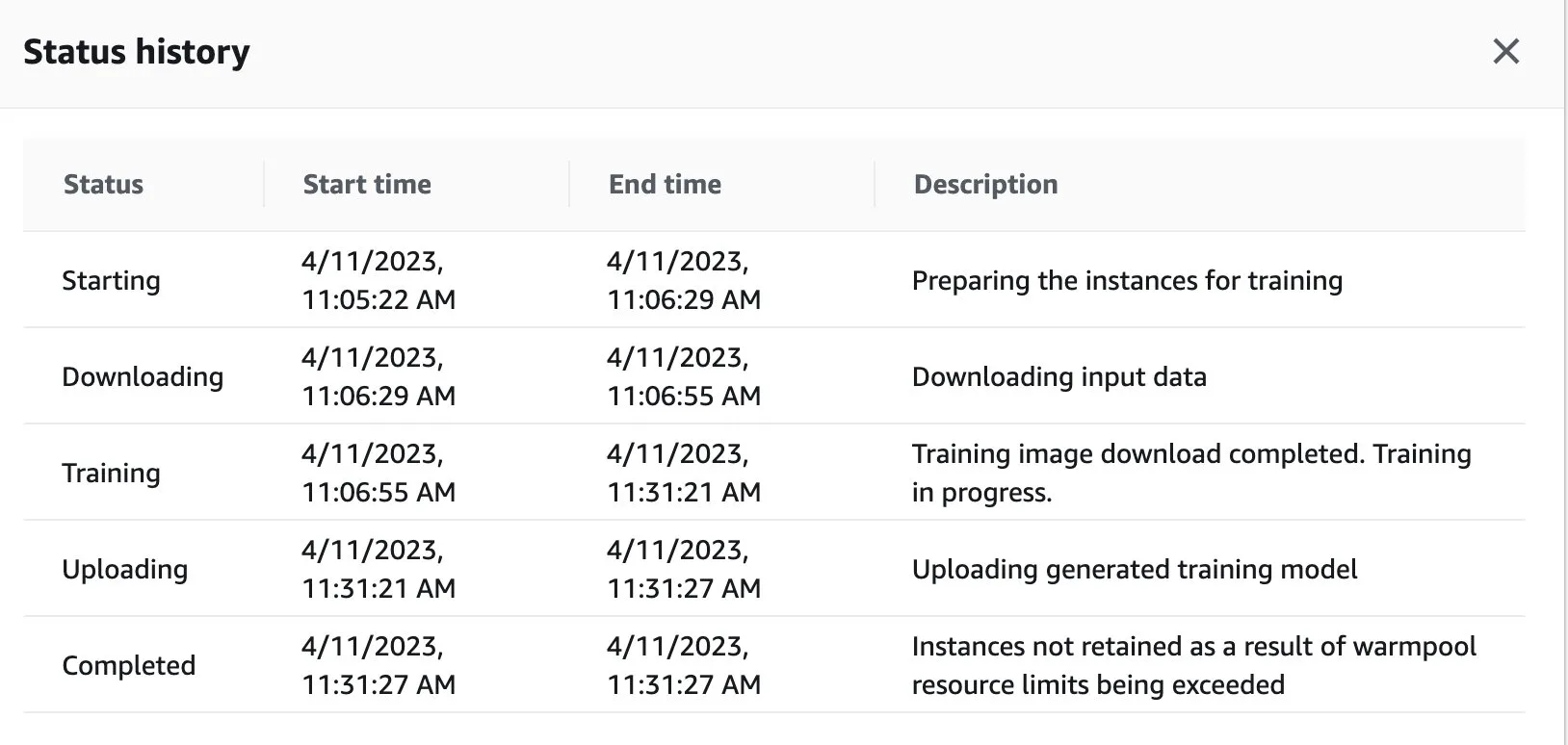

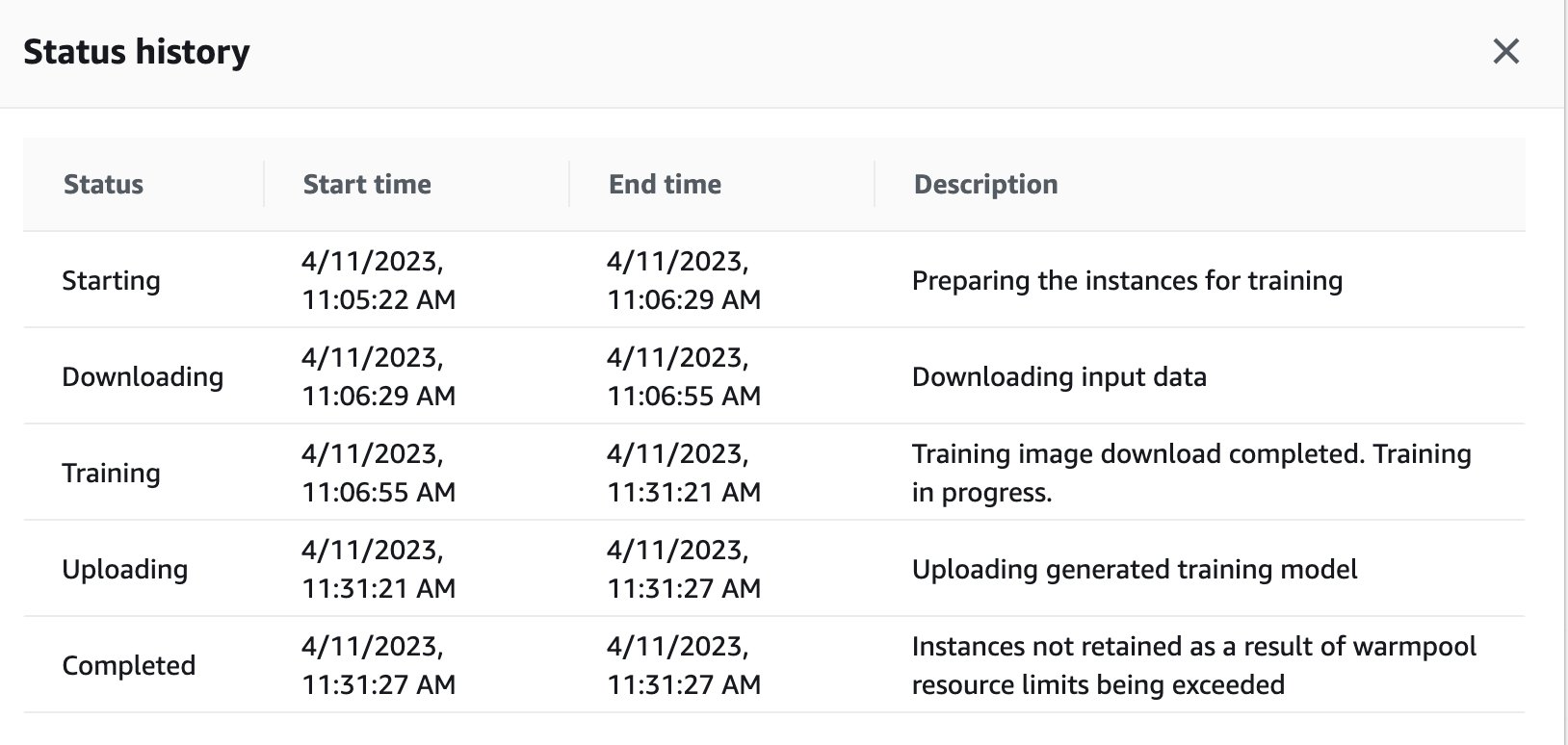

You can also view the status of the remotely triggered training job, which runs on the GPU instance, by navigating back to the SageMaker console.

As soon as the training job is complete, it continues to run the instructions in the notebook for evaluation and classification. Similar jobs can be trained and run via the remote executor function embedded within Studio notebooks to carry out the runs asynchronously.
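The asynchronous pattern follows the familiar submit-and-future flow of concurrent.futures. The sketch below uses the standard library ThreadPoolExecutor purely as a local stand-in, on the assumption (taken from this post) that RemoteExecutor follows the same Executor interface. The train function and its arguments are hypothetical placeholders and no SageMaker job is launched.

```python
from concurrent.futures import ThreadPoolExecutor
import time


def train(epochs, learning_rate):
    # Placeholder for a long-running training function.
    time.sleep(0.1)
    return {"epochs": epochs, "learning_rate": learning_rate}


# ThreadPoolExecutor is a local stand-in here, not the SageMaker executor.
with ThreadPoolExecutor(max_workers=2) as executor:
    # submit() returns immediately with a Future for each run.
    futures = [
        executor.submit(train, epochs=1, learning_rate=5e-5),
        executor.submit(train, epochs=2, learning_rate=1e-4),
    ]
    # result() blocks until the corresponding run has finished.
    results = [f.result() for f in futures]

print(results[0]["epochs"], results[1]["epochs"])  # 1 2
```

Compared with the synchronous decorator call, this lets the notebook submit several runs first and collect their results afterwards.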

Integration with SageMaker Experiments inside a @remote function

You can pass your experiment name, run name, and other parameters into your remote function to create a SageMaker Experiments run. The following code example passes in the experiment name, the name of the run, and the parameters to log for each run:

    from sagemaker.remote_function import remote
    from sagemaker.experiments.run import Run

    # Define your remote function
    @remote
    def train(value_1, value_2, exp_name, run_name):
        ...
        ...
        # Creates the experiment
        with Run(
            experiment_name=exp_name,
            run_name=run_name,
            sagemaker_session=sagemaker_session  # a SageMaker session created earlier
        ) as run:
            ...
            ...
            # Define values for the parameters to log
            run.log_parameter("param_1", value_1)
            run.log_parameter("param_2", value_2)
            ...
            ...
            # Define metrics to log
            run.log_metric("metric_a", 0.5)
            run.log_metric("metric_b", 0.1)

    # Invoke your remote function
    train(1.0, 2.0, "my-exp-name", "my-run-name")

In the preceding example, the parameters param_1 and param_2 are logged over time inside a training loop. Common parameters may include batch size or epochs. In the example, the metrics metric_a and metric_b are logged for a run over time inside a training loop. Common metrics may include accuracy or loss. For more information, see Create an Amazon SageMaker Experiment.

Conclusion

In this post, we introduced a new SageMaker Python SDK capability that enables data scientists to run their ML code in their preferred IDE as SageMaker Training jobs. We discussed the prerequisites needed to use this capability along with its features. We also showed how to use this capability in Studio, SageMaker notebook instances, and your local IDE. In addition, we provided sample code examples to demonstrate how to use this capability. As a next step, we recommend trying this capability in your IDE or SageMaker by following the code examples referenced in this post.

About the Authors

Dipankar Patro is a Software Development Engineer at AWS SageMaker, innovating and building MLOps solutions to help customers adopt AI/ML solutions at scale. He has an MS in Computer Science and his areas of interest are Computer Security, Distributed Systems and AI/ML.


Farooq Sabir is a Senior Artificial Intelligence and Machine Learning Specialist Solutions Architect at AWS. He holds PhD and MS degrees in Electrical Engineering from the University of Texas at Austin and an MS in Computer Science from Georgia Institute of Technology. He has over 15 years of work experience and also likes to teach and mentor college students. At AWS, he helps customers formulate and solve their business problems in data science, machine learning, computer vision, artificial intelligence, numerical optimization, and related domains. Based in Dallas, Texas, he and his family love to travel and go on long road trips.


Manoj Ravi is a Senior Product Manager for Amazon SageMaker. He is passionate about building next-gen AI products and works on software and tools to make large-scale machine learning easier for customers. He holds an MBA from Haas School of Business and a Masters in Information Systems Management from Carnegie Mellon University. In his spare time, Manoj enjoys playing tennis and pursuing landscape photography.


Shikhar Kwatra is an AI/ML Specialist Solutions Architect at Amazon Web Services, working with a leading Global System Integrator. He has earned the title of one of the Youngest Indian Master Inventors with over 500 patents in the AI/ML and IoT domains. Shikhar aids in architecting, building, and maintaining cost-efficient, scalable cloud environments for the organization, and supports the GSI partner in building strategic industry solutions on AWS. Shikhar enjoys playing guitar, composing music, and practicing mindfulness in his spare time.


Vikram Elango is a Sr. AI/ML Specialist Solutions Architect at AWS, based in Virginia, US. He is currently focused on generative AI, LLMs, prompt engineering, large model inference optimization, and scaling ML across enterprises. Vikram helps financial and insurance industry customers with design and thought leadership to build and deploy machine learning applications at scale. In his spare time, he enjoys traveling, hiking, cooking, and camping.
