The intricate hierarchical data structures in data warehouses and lakes sourced from diverse origins can make data modeling a protracted and error-prone process. To quickly adapt and create data models that meet evolving business requirements without having to rework them excessively, you need data models that are flexible, modular and adaptable enough to accommodate many requirements. This requires advanced technologies, proficient personnel, and robust methodologies.

The advancements in generative AI offer numerous opportunities to address these challenges. Multimodal large language models (LLMs) can analyze examples of data in the data lake, including text descriptions, code, and even images of existing databases. By understanding this data and its relationships, LLMs can suggest or even automatically generate schema layouts, simplifying the laborious process of implementing the data model within the database, so developers can focus on higher value data management tasks.

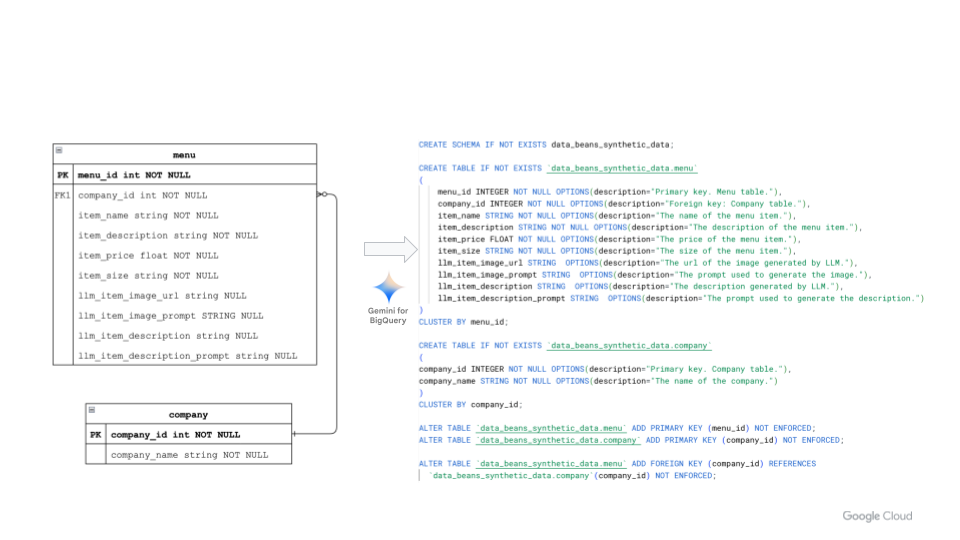

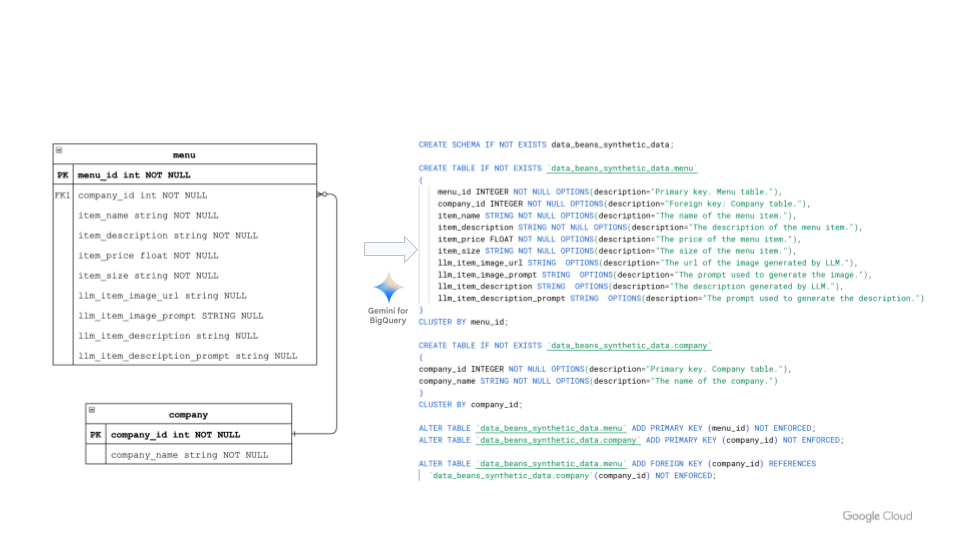

In this blog, we walk you through how to use multimodal LLMs in BigQuery to create a database schema. To do so, we’ll take a real-world example of entity relationship (ER) diagrams and examples of data definition languages (DDLs), and create a database schema in three steps.

For this demonstration, we will use Data Beans, a fictional technology company built on BigQuery that provides a SaaS platform to coffee sellers. Data Beans leverages BigQuery’s integration with Vertex AI to access Google AI models like Gemini Vision Pro 1.0 to analyze unstructured data and integrate it with structured data, while using BigQuery to help with data modeling and generating insights.

STEP1 : Create an entity relationship diagram

The first step is to create an ER diagram using your favorite modeling tool, or to take a screenshot of an existing ER diagram. The ER diagram can contain primary key and foreign key relationships, and will then be used as an input to the Gemini Vision Pro 1.0 model to create relevant BigQuery DDLs.

STEP2 : Create a prompt with the ER image as input

Next, to create the DDL statements in BigQuery, write a prompt to take an ER image as an input. The prompt should include detailed and relevant rules that the Gemini model should follow. In addition, make sure the prompt captures learnings from the previous iterations — in other words, be sure to update your prompt as you experiment and iterate it. These can be provided as examples to the model, for example a valid schema description for BigQuery. Providing a working example for the model to follow will help the model create a data definition DDL that follows your desired rules.

- code_block

- <ListValue: [StructValue([(‘code’, ‘## Prompt to guide the modelrnllm_erd_prompt=f”””Use BigQuery SQL commands to create the following:rn- Create a new BigQuery schema named “{dataset_id}”.rn- Use only BigQuery data types. Double and triple check this since it causes a lot of errors.rn- Create the BigQuery DDLs for the attached ERD.rn- Create primary keys for each table using the ALTER command. Use the “NOT ENFORCED” keyword.rn- Create foreign keys for each table using the ALTER command. Use the “NOT ENFORCED” keyword.rn- For each field add an OPTIONS for the description.rn- Cluster the table by the primary key.rn- For columns that can be null do not add “NULL” to the created SQL statement. BigQuery leaves this blank.rn- All ALTER TABLE statements should be at the bottom of the generated script.rn- The ALTER TABLE statements should be ordered by the primary key statements and then the foreign key statements. Order matters!rn- Double check your work especially that you used ONLY BigQuery data types.rnrnrnPrevious Errors that have been generated by this script. Be sure to check your work to avoid encountering these.rn- Query error: Type not found: FLOAT at [6:12]rn- Query error: Table test.company does not have Primary Key constraints at [25:1]rnrnrn## Example for model to influence fromrnExample:rnCREATE TABLE IF NOT EXISTS `{project_id}.{dataset_id}.customer`rn(rn customer_id INTEGER NOT NULL OPTIONS(description=”Primary key. Customer table.”),rn country_id INTEGER NOT NULL OPTIONS(description=”Foreign key: Country table.”),rn customer_llm_summary STRING NOT NULL OPTIONS(description=”LLM generated summary of customer data.”),rn customer_lifetime_value STRING NOT NULL OPTIONS(description=”Total sales for this customer.”),rn customer_cluster_id FLOAT NOT NULL OPTIONS(description=”Clustering algorithm id.”),rn customer_review_llm_summary STRING OPTIONS(description=”LLM summary are all of the customer reviews.”),rn customer_survey_llm_summary STRING OPTIONS(description=”LLM summary are all of the customer surveys.”)rn)rnCLUSTER BY customer_id;rnrnrnCREATE TABLE IF NOT EXISTS `{project_id}.{dataset_id}.country`rn(rncountry_id INTEGER NOT NULL OPTIONS(description=”Primary key. Country table.”),rncountry_name STRING NOT NULL OPTIONS(description=”The name of the country.”)rn)rnCLUSTER BY country_id;rnrnrnrnrnALTER TABLE `{project_id}.{dataset_id}.customer` ADD PRIMARY KEY (customer_id) NOT ENFORCED;rnALTER TABLE `{project_id}.{dataset_id}.country` ADD PRIMARY KEY (country_id) NOT ENFORCED;rnrnrnALTER TABLE `{project_id}.{dataset_id}.customer` ADD FOREIGN KEY (country_id) REFERENCES `{project_id}.{dataset_id}.country`(country_id) NOT ENFORCED;rn”””‘), (‘language’, ”), (‘caption’, <wagtail.rich_text.RichText object at 0x3e05be1658b0>)])]>

Now you have an image of an ER diagram to present to your LLM.

STEP 3: Call the Gemini Pro 1.0 Vision model

After creating a prompt in Step 2, you are now ready to call the Gemini Pro 1.0 Vision model to generate the output by using the image of your ER diagram as an input (left side of Figure 1). You can do this in a number of ways — either directly from Colab notebooks using Python, or through BigQuery ML, leveraging its integration with Vertex AI:

- code_block

- <ListValue: [StructValue([(‘code’, ‘imageBase64 = convert_png_to_base64(menu_erd_filename)rnrnrnllm_response = GeminiProVisionLLM(llm_erd_prompt, imageBase64, temperature=.2, topP=1, topK=32)’), (‘language’, ”), (‘caption’, <wagtail.rich_text.RichText object at 0x3e05be165f40>)])]>

Conclusions and resources

In this demonstration, we saw how the multimodal Gemini model can streamline the creation of data and schemas. And while manually writing prompts is fine, it can be a daunting task when you need to do it at enterprise scale to create thousands of assets such as DDLs. Leveraging the above process, you can parameterize and automate prompt generation, dramatically speeding up the workflow and providing consistency across thousands of generated artifacts. You can find the complete Colab Enterprise notebook source code here.

BigQuery ML includes many new features to let you use Gemini Pro capabilities; for more, please see the documentation. Then, check out this tutorial to learn how to apply Google’s models to your data, deploy models, and operationalize ML workflows — all without ever moving your data from BigQuery. Finally, for a behind-the-scenes look on how we made this demo, watch this video on how to build an end-to-end data analytics and AI application using advanced models like Gemini directly from BigQuery.

Googlers Luis Velasco, Navjot Singh, Skander Larbi and Manoj Gunti contributed to this blog post. Many Googlers contributed to make these features a reality