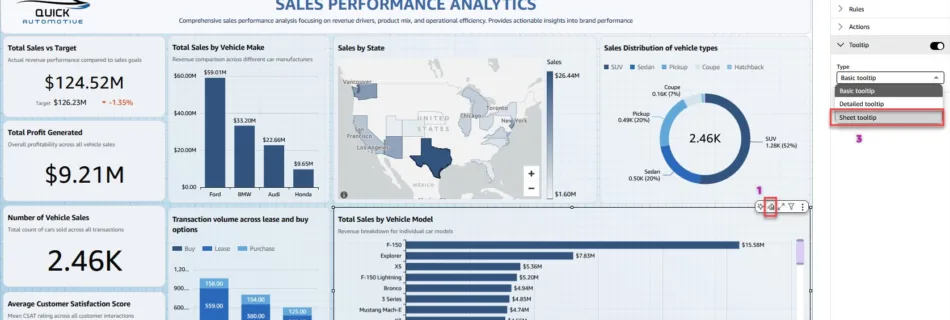

Create rich, custom tooltips in Amazon Quick Sight

Amazon Quick Sight, the business intelligence (BI) capability of Amazon Quick, is a unified BI service. It provides modern interactive dashboards, natural language querying, pixel-perfect reports, machine learning (ML) insights, and embedded analytics at scale. Amazon Quick brings together AI agents for business insights, research, and automation in one integrated experience, helping you work smarter …
