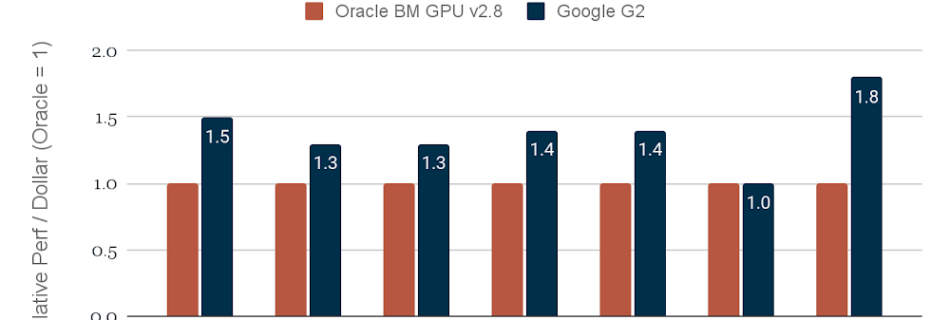

Helping you deliver high-performance, cost-efficient AI inference at scale with GPUs and TPUs

The pace of progress in AI model architectures is staggering, driven by breakthrough inventions such as the Transformer and by rapid growth in high-quality training data. In generative AI, for instance, large language models (LLMs) have been growing in size by as much as 10x per year. Organizations are deploying these AI models in their products …