Making AI and ML Operational: The Modeling Objective (Palantir RFx Blog Series, #7)

AI operating systems need to work in the real world, not just in a lab. The Modeling Objective is the critical link between a model’s creation and operational use.

Editor’s note: This is the seventh post in the Palantir RFx Blog Series, which explores how organizations can better craft RFIs and RFPs to evaluate digital transformation software. Each post focuses on one key capability area within a data ecosystem, with the goal of helping companies ask the right questions to better assess technology. Previous installments in this series have included posts on Ontology, Data Connection, Version Control, Interoperability, Operational Security, and Privacy-Enhancing Technologies.

Introduction

Palantir’s history with Artificial Intelligence & Machine Learning (AI/ML) began with our company’s founding in 2003. Today, Palantir is considered one of the top AI/ML companies in the world, if not the top. And yet, our approach to AI/ML computational techniques has not been one of massive adoption. Palantir’s approach to AI has been driven by realism. We are wary of hype cycles and instead invest in technologies that we believe will actually produce operational results. In April 2023, Palantir CEO Alex Karp announced the coming fourth Palantir platform, which will focus specifically on the integration of AI/ML, especially Large Language Models (LLMs), with data analytics and operations.

In 2019, Palantir’s Chief Technology Officer Shyam Sankar wrote about how big data, peddled by many as “the new oil,” was more akin to snake oil given the massive and persistent gap between what vendors promised and what they actually delivered. Much of our realism about AI/ML technologies originates from a generalized skepticism that any single technology can provide the silver bullet solution to a given problem, even when that technology has truly transformative potential. The same can be said about many of the specific technologies that comprise a data ecosystem — entity extraction, predictive analytics, real-time data streaming, etc. AI technologies, as we discussed in a recent blog post, are certainly no exception.

Palantir is very strong in the AI/ML space specifically because of our healthy realism and our belief in the value of systems over specific technologies. We have spent our time and energy building the operating systems that enable AI/ML to be effectively deployed in the context of complex data environments with varied goals and missions (i.e., AI-OS). Palantir doesn’t focus on building or selling AI/ML models, nor on building or selling AI/ML libraries. Rather, Palantir is focused on building an AI Operating System that allows an organization to actually use AI/ML models as part of an ecosystem of value creation and continual improvement. Importantly, this system-level approach also helps to bring contextual considerations to life, including the ethics surrounding the use of the technology. In this way, our approach helps encourage a more responsible deployment of AI/ML, or what is often referred to as “Responsible AI.”

In this post, we introduce an important part of the AI-OS which is referred to by us (and others) as ML Ops — essentially those tools that are needed to operationalize Machine Learning and AI Models. We begin with a brief taxonomy of ML Ops and then dive more deeply into one of the oft-overlooked components of effective AI/ML: the Modeling Objective.

The taxonomy of ML Ops

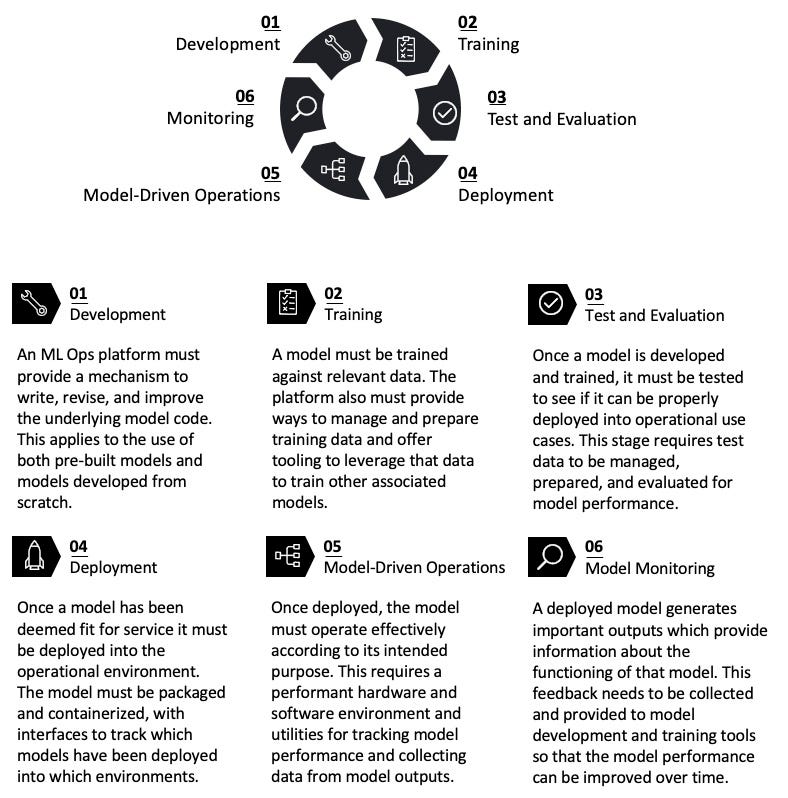

ML Ops is too large a topic to cover in a single post, but it is useful to define what we mean by the term and the classes of capabilities it covers. ML Ops provides an AI-OS with the technological substrate for a cyclic approach to AI/ML, traversing the necessary steps for models to be developed, trained, tested, deployed, and monitored. This cycle itself is what is referred to as “ML Ops.”

In this post we focus on the often overlooked deployment of models, which is critical to translating research from the lab into operations in the real world. Model deployment requires treating models as inputs to a process that understands the different operational objectives a model might serve, and then moving those models into the corresponding operational environments in a usable form. We refer to this process of deploying an AI/ML model into the operational environment as the Modeling Objective.

What are Modeling Objectives?

“Model” is a broadly used term that, in the context of an AI-OS, refers to a computational algorithm that takes a series of inputs and produces a series of outputs. In this sense, a model can be thought of as a special class of data transformation. In AI/ML contexts, models often refer to algorithms intended to mimic, replace, or approximate some real-world process. Models can be simple or complex. They can be based on real-world physics or developed through training and parameterization. Artificial Intelligence & Machine Learning encompass a large range of relatively complex modeling techniques that usually attempt to replicate the perceptive or adaptive capacity of humans. Regardless of the complexity or simplicity of a model, it’s important to note that an AI/ML model does not contain its purpose within itself. This is where the concept of a modeling objective becomes necessary.

A Modeling Objective provides the relevant and necessary context in which a model operates. It defines relevant data sources, metadata, and the specific business problem that the model is intended to address. Modeling Objectives are first-class concepts in data ecosystems and have defined interfaces, providing relevant details to end users. When a model is introduced into an existing modeling objective, it becomes part of a defined business purpose and is associated with new purpose-specific metadata. Additionally, data and metadata from the model itself can be automatically extracted so that the modeling objective can effectively leverage the model, and update or replace it as improved iterations are developed.

For example, a metals supplier might have an interest in reducing the carbon footprint associated with their products. Metals start with raw ores that are extracted, smelted, transported to refineries, and finally delivered to their destination. Different combinations of mines, smelters, refineries, and transportation routes can be combined in many ways that will be dynamic through time. A modeling objective could be created with the goal of identifying a delivery path that leads to the lowest carbon impact. Models can be developed to address this problem and after training, testing, and tuning, the models can be registered with a modeling objective where they can then be leveraged as part of the metals delivery schedule.
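To make the metals example concrete, here is a minimal sketch of an objective as a data structure. The class names, fields, and the `route-scorer` model are hypothetical and chosen only for illustration; they are not the interface of any Palantir product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelVersion:
    """A trained model plus metadata extracted from it (hypothetical shape)."""
    name: str
    version: str
    metadata: Dict[str, str] = field(default_factory=dict)


@dataclass
class ModelingObjective:
    """Context for one business problem: purpose, data sources, registered models."""
    name: str
    business_problem: str
    data_sources: List[str]
    models: List[ModelVersion] = field(default_factory=list)

    def register(self, model: ModelVersion) -> ModelVersion:
        # Attach purpose-specific metadata to a copy; the original model is untouched.
        tagged = ModelVersion(
            model.name,
            model.version,
            {**model.metadata, "objective": self.name, "purpose": self.business_problem},
        )
        self.models.append(tagged)
        return tagged


objective = ModelingObjective(
    name="low-carbon-routing",
    business_problem="Identify the delivery path with the lowest carbon impact",
    data_sources=["mines", "smelters", "refineries", "transport_routes"],
)
registered = objective.register(
    ModelVersion("route-scorer", "1.0.0", {"framework": "example"})
)
```

The point of the sketch is that the model carries no purpose of its own; the purpose arrives as metadata at registration time.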

Each modeling objective provides an interface that acts as a representation of the key variables in the operational environment where the model is to be deployed. The purpose of this digital deployment interface is to build a workflow for delivering models into production when they have been approved for release. The digital deployment interface is used to control which specific model and version is being used in a production environment while also providing tooling for understanding the health of the real-world deployment.
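One way to picture this deployment interface is as a small object that records which approved model version is live in an environment. The sketch below is an assumption-laden illustration: the `Deployment` class, its methods, and the model names are invented for this example.

```python
class Deployment:
    """Tracks which approved model version is live in one environment."""

    def __init__(self, environment: str):
        self.environment = environment
        self.approved = set()   # (model, version) pairs cleared for release
        self.history = []       # every pair that has been live, oldest first

    def approve(self, model: str, version: str) -> None:
        self.approved.add((model, version))

    def promote(self, model: str, version: str) -> None:
        # Only releases that have been approved may reach production.
        if (model, version) not in self.approved:
            raise PermissionError(f"{model}@{version} is not approved for release")
        self.history.append((model, version))

    def rollback(self) -> None:
        if len(self.history) < 2:
            raise RuntimeError("no earlier release to roll back to")
        self.history.pop()

    @property
    def live(self):
        return self.history[-1] if self.history else None


prod = Deployment("production")
prod.approve("route-scorer", "1.0.0")
prod.approve("route-scorer", "1.1.0")
prod.promote("route-scorer", "1.0.0")
prod.promote("route-scorer", "1.1.0")
prod.rollback()  # 1.1.0 misbehaves, so return to the previous release
```

Keeping the release history in one place is what lets the same interface answer both "what is running now?" and "what was running before?".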

Just as modeling objectives may leverage a selection of models for a defined business purpose, a collection of modeling objectives can be aggregated into a model library that serves as a clearinghouse for modeling objectives. The model library offers an interface for users to track which models are leveraged by which objectives, which models have been deployed in production environments, and how recently a modeling objective has been updated. This library also provides a natural starting place for generating new modeling objectives, or deprecating modeling objectives that are no longer needed. By deploying models and tracking their usage through this single interface, users can monitor model usage and ensure that the models are being effectively deployed.

These production workflows borrow from the best practices of Continuous Integration and Continuous Delivery (CI/CD) in software development. The main goal is to streamline the process around creating, changing, reviewing, and shipping models for operations. In order to facilitate these functions, deployments have built-in upgrade and health checks. These checks provide alerts for the status of the deployment itself (e.g., did a build or query complete as expected?) as well as the status of any model upgrade tasks.
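A health check of this kind can be sketched as a named function that either reports a status or fails, with failures turned into alerts. The check names and the simulated timeout below are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class CheckResult:
    name: str
    healthy: bool
    detail: str


def run_checks(checks: Dict[str, Callable[[], bool]]) -> List[CheckResult]:
    """Run each named check; a check that raises is reported as unhealthy."""
    results = []
    for name, check in checks.items():
        try:
            ok = bool(check())
            detail = "ok" if ok else "check returned a failing status"
        except Exception as exc:
            ok, detail = False, f"check raised {type(exc).__name__}: {exc}"
        results.append(CheckResult(name, ok, detail))
    return results


def alerts(results: List[CheckResult]) -> List[str]:
    """Produce one alert message per unhealthy check."""
    return [f"ALERT {r.name}: {r.detail}" for r in results if not r.healthy]


def failing_query() -> bool:
    # Simulated failure standing in for a query that did not complete.
    raise TimeoutError("query exceeded 30s")


results = run_checks({
    "build_completed": lambda: True,
    "query_completed": failing_query,
    "upgrade_task_finished": lambda: True,
})
messages = alerts(results)
```

Here only the query check fails, so `messages` contains a single alert while the build and upgrade checks pass silently.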

Why is a Modeling Objective important?

The Modeling Objective is the critical link between a model’s creation and operational use. Without it, models are all too often relegated to labs, demonstrations, or siloed academic settings.

For example, consider a computer-vision model that helps identify the manufacturer of an automobile from an image of the vehicle. The model would be trained on a series of images of cars that have all been labeled with the correct manufacturer. Then, in a lab setting, the model could be exposed to test data of unlabeled images to see how well it works. To make this model operational, it needs to be deployed into a purpose-driven operational environment. The purpose of the model could be to identify vehicles that pass through a tollbooth. In this environment, there could be a server running the model based on images collected by a camera on the tollbooth with strong connectivity between the booth and the centralized infrastructure. Another purpose for the model could be to automate image recognition by a traffic control aircraft that is monitoring road conditions but has unreliable connectivity back to the ground. These dramatically different contexts are captured by the Modeling Objective, which places the correct constraints on the included models so that they generate outputs in accordance with the purposes for which the models have been deployed.
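As a rough illustration, the two contexts can be expressed as constraints from which a deployment plan is derived. The field names and the rule mapping connectivity to a plan are assumptions for this sketch, not a description of how any real system decides.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class OperationalContext:
    """Constraints of the environment a model is deployed into (hypothetical)."""
    name: str
    reliable_connectivity: bool


def plan_deployment(context: OperationalContext) -> Dict[str, str]:
    """Derive where inference runs from the context's constraints."""
    if context.reliable_connectivity:
        # Images can be streamed to central infrastructure and scored there.
        return {"inference": "central-server", "results": "streamed"}
    # With an unreliable link, score on the device and sync when possible.
    return {"inference": "on-device", "results": "store-and-forward"}


tollbooth = OperationalContext("tollbooth", reliable_connectivity=True)
aircraft = OperationalContext("traffic-aircraft", reliable_connectivity=False)

tollbooth_plan = plan_deployment(tollbooth)
aircraft_plan = plan_deployment(aircraft)
```

The same trained model sits behind both plans; only the context recorded by the objective differs.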

When the modeling objective is effectively implemented, it allows for collaborative work between model builders and model users while also making it easier to leverage models in a variety of settings. The modeling objective also clarifies how to improve models over time and identifies which models have the most effective application. In this way, the modeling objective serves as a centralized interface through which the many contributors to the AI-OS can communicate with each other and achieve better outcomes than they would in a more siloed infrastructure.

The modeling objective also provides a single interface for packaging and containerizing models so that they can be deployed into myriad environments without requiring a separate integration for every pairing of model and deployment environment. This structured intermediary allows model development to be effectively isolated from the operational environment so that each can be agnostic to the other.
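The benefit of a structured intermediary can be shown with a small sketch: each model is packaged once to a common call signature and each environment is written once against that signature, so two models and two environments need four pieces of code rather than one per pairing. The model types and environment names here are hypothetical.

```python
from typing import Callable, Dict, List

# Every packaged model is reduced to one signature: features in, score out.
Predict = Callable[[Dict[str, float]], float]


def package_linear(weights: Dict[str, float]) -> Predict:
    """Packaging adapter for a (hypothetical) linear model."""
    return lambda features: sum(w * features.get(k, 0.0) for k, w in weights.items())


def package_threshold(key: str, cutoff: float) -> Predict:
    """Packaging adapter for a (hypothetical) rule-based model."""
    return lambda features: 1.0 if features.get(key, 0.0) > cutoff else 0.0


def serve_online(model: Predict, request: Dict[str, float]) -> float:
    """Environment 1: score one request at a time."""
    return model(request)


def serve_batch(model: Predict, rows: List[Dict[str, float]]) -> List[float]:
    """Environment 2: score a stored batch of rows."""
    return [model(row) for row in rows]


models = {"linear": package_linear({"x": 2.0}), "rule": package_threshold("x", 1.0)}
online = {name: serve_online(m, {"x": 1.5}) for name, m in models.items()}
batch = {name: serve_batch(m, [{"x": 0.5}, {"x": 3.0}]) for name, m in models.items()}
```

Adding a third model means writing one new packaging function; neither serving function changes.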

Taken in total, the Modeling Objective is probably the most important and most often overlooked part of ML Ops.

Requirements

Below are some key requirements for organizations seeking to establish strong Modeling Objective capabilities within a broader AI/ML operating system.

The solution must allow users to establish Modeling Objectives. Each objective includes a text (markdown) field describing the organizational application of the objective and how it is intended to function. This provides a clear statement of intent and purpose, allowing for greater transparency, justification, and use case-specific evaluation.

Modeling Objectives must include API inputs and outputs. This is a defined list of required input and output fields that can be upgraded as breaking changes are released and is used to directly inform downstream applications of how this modeling objective can be consumed.

Modeling Objectives must be agnostic to the model implementation. Models can come from many sources and take on many different forms, and the state of the art can change very quickly. The Modeling Objective should have an interface for packaging and delivering any model into an operational environment to provide maximum flexibility for data scientists, while maintaining a consistent ML Ops toolkit.

The Modeling Objective must systematically evaluate models. Systematic model evaluation is critical to ensure that organizations are consistently and fairly evaluating models against a set of organizational standards. Systematic model evaluation should automatically track the testing data, version of the testing data, model version, and evaluation logic to ensure like-for-like comparison of model performance. These metrics should be consumable inside the modeling objective and based on configured input datasets and evaluation pipelines. Key metrics should be configurable and able to be pinned to highlight important information.

The Modeling Objective must provide an interface for reviews. Reviews are part of a governance workflow where subject matter experts can provide feedback and approvals on models that are tracked and assigned. Deployments should be configurable to require that specified individuals or members of specified groups approve models before they are released into production settings. This ensures models are correctly vetted by all stakeholders before production use. See this page for information about how multi-stakeholder checks and reviews are enabled in Foundry.

The Modeling Objective must include an interface to track and manage models through the AI/ML lifecycle. A modeling objective should track all candidate models as they progress through the ML lifecycle — from development to deployment. Modeling Objectives should track all models that are used in production so that individual inferences are auditable to a specific model, evaluation, and approval of that model.
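Several of the requirements above (declared API inputs, like-for-like evaluation, review gates, and auditable inferences) can be sketched together in a few lines. Everything in this example is an assumption for illustration: the class and function names, the reviewer roles, the dataset name, and the accuracy figures are all made up.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass(frozen=True)
class Evaluation:
    """One evaluation run, recording what is needed for like-for-like comparison."""
    model_version: str
    dataset: str
    dataset_version: str
    evaluation_logic: str
    metrics: tuple  # pairs of (metric name, value); the values below are made up

    def comparable_to(self, other: "Evaluation") -> bool:
        # Same test data, same data version, same evaluation logic.
        return (self.dataset, self.dataset_version, self.evaluation_logic) == (
            other.dataset, other.dataset_version, other.evaluation_logic)


@dataclass
class Candidate:
    model_version: str
    evaluation: Evaluation
    approvals: Set[str] = field(default_factory=set)


def validate_payload(required_fields: Dict[str, type], payload: dict) -> None:
    """Reject a request that does not match the objective's declared input fields."""
    for name, expected in required_fields.items():
        if not isinstance(payload.get(name), expected):
            raise ValueError(f"field {name!r} is missing or is not {expected.__name__}")


def releasable(candidate: Candidate, required_reviewers: Set[str]) -> bool:
    """A candidate may be released only once every required reviewer has approved."""
    return required_reviewers <= candidate.approvals


def audit_record(candidate: Candidate, payload: dict, output) -> dict:
    """Tie one inference back to the model version, its evaluation, and its approvals."""
    ev = candidate.evaluation
    return {
        "model_version": candidate.model_version,
        "evaluated_on": f"{ev.dataset}@{ev.dataset_version}",
        "approved_by": sorted(candidate.approvals),
        "input": payload,
        "output": output,
    }


baseline = Evaluation("1.0.0", "labeled-vehicle-images", "v3", "accuracy-v1", (("accuracy", 0.90),))
challenger = Evaluation("1.1.0", "labeled-vehicle-images", "v3", "accuracy-v1", (("accuracy", 0.93),))
candidate = Candidate("1.1.0", challenger)
candidate.approvals.update({"data-science-lead", "operations-lead"})

validate_payload({"image_id": str}, {"image_id": "img-001"})
record = audit_record(candidate, {"image_id": "img-001"}, "example-manufacturer")
```

Because the evaluation stores the dataset version and evaluation logic alongside the metrics, two runs can be compared only when `comparable_to` holds, which is the like-for-like guarantee the requirement describes.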

Conclusion

Any organization seeking to build a robust ML Ops capability requires a modeling objective. That’s why our newly announced Artificial Intelligence Platform recognizes the critical requirement within ML Ops: the ability to take an AI/ML model from development to operational context. The modeling objective interface enables organizations to define a model’s purpose and evaluate that model within the cyclical process of build > train > deploy > evaluate > build. Palantir AIP will optimize an AI-OS as part of the complex operational and decision-making workflows that current and future customers require.

For more information about how we deploy these concepts with government and commercial organizations, please see:

Making AI and ML Operational: The Modeling Objective was originally published in Palantir Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.