Sparked by the release of large AI models like AlexaTM, GPT, OpenChatKit, BLOOM, GPT-J, GPT-NeoX, FLAN-T5, OPT, Stable Diffusion, and ControlNet, the popularity of generative AI has seen a recent boom. Businesses are beginning to evaluate new cutting-edge applications of the technology in text, image, audio, and video generation that have the potential to revolutionize the services they provide and the ways they interact with customers. However, as the size and complexity of the deep learning models that power generative AI continue to grow, deployment can be a challenging task. Advanced techniques such as model parallelism and quantization become necessary to achieve latency and throughput requirements. Without expertise in using these techniques, many customers struggle to get started with hosting large models for generative AI applications.

This post can help! We begin by discussing different types of model optimizations that can be used to boost performance before you deploy your model. Then, we highlight how Amazon SageMaker large model inference deep learning containers (LMI DLCs) can help with optimization and deployment. Finally, we include code examples using LMI DLCs and FasterTransformer model parallelism to deploy models like flan-t5-xxl and flan-ul2. You can find an accompanying example notebook in the SageMaker examples repository.

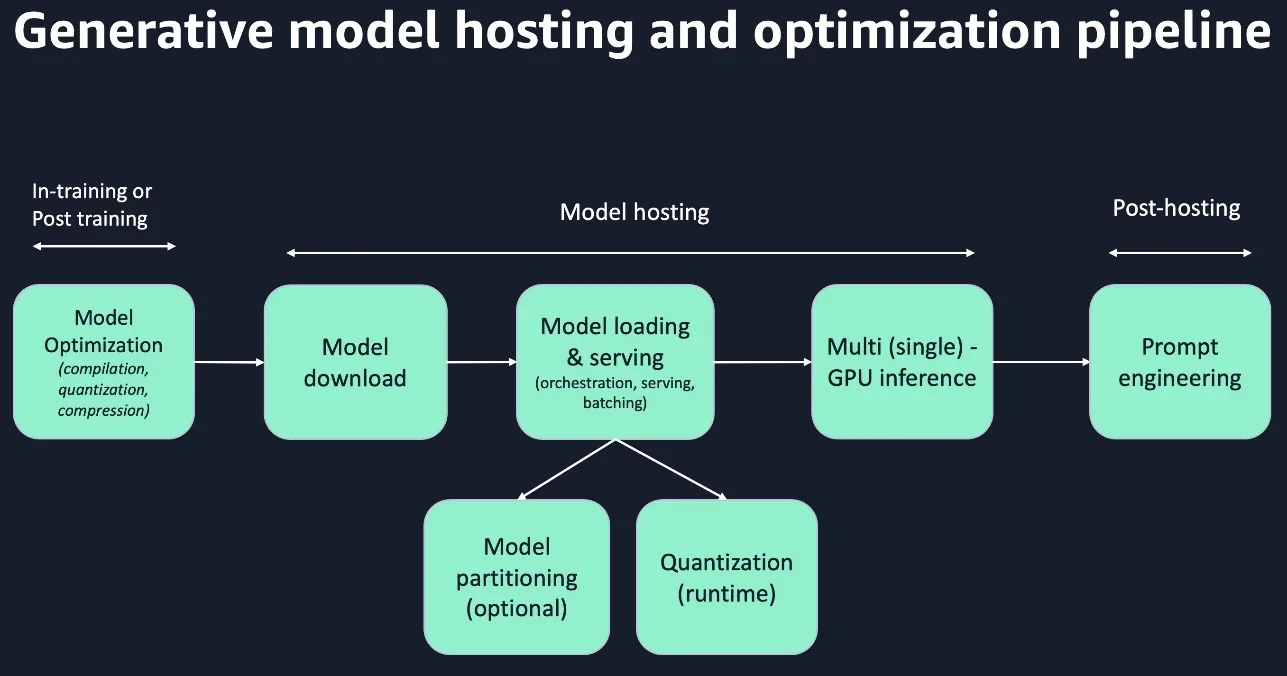

Large model deployment pipeline

Major steps in any model inference workflow include loading a model into memory and handling inference requests on this in-memory model through a model server. Large models complicate this process because loading a 350 GB model such as BLOOM-176B can take tens of minutes, which materially impacts endpoint startup time. Furthermore, because these models can’t fit within the memory of a single accelerator, the model must be organized and partitioned so that it can be spread across the memory of multiple accelerators; model servers must then handle processes and communication across multiple accelerators.

Beyond model loading, partitioning, and serving, compression techniques are increasingly necessary to achieve performance goals (such as subsecond latency) for customers working with large models. Quantization and compression can reduce model size and serving cost by reducing the precision of weights or reducing the number of parameters via pruning or distillation. Compilation can optimize the computation graph and fuse operators to reduce the memory and compute requirements of a model.

Achieving low latency for large language models (LLMs) requires improvements in all the steps in the inference workflow: compilation, model loading, compression (runtime quantization), partitioning (tensor or pipeline parallelism), and model serving. At a high level, partitioning (with kernel optimization) brings down inference latency by up to 66% (for example, BLOOM-176B from 30 seconds to 10 seconds), compilation by 20%, and compression by 50% (FP32 to FP16). An example pipeline for large model hosting with runtime partitioning is illustrated in the following diagram.

Overview of large model inference optimization techniques

With the large model deployment pipeline in mind, we now explore the optimizations. Optimizations can be critical to achieve latency and throughput goals. However, you need to be thoughtful about which optimizations you use and to what degree, because the accuracy of your model can be affected.

The following diagram is a high-level overview of different inference optimization techniques. Optimization approaches can be at the hardware or software level. We focus only on software optimization techniques in this post.

Optimized kernels and compilation

Today, optimized kernels are the greatest source of performance improvement for LMI (for example, DeepSpeed’s kernels reduced BLOOM-176B latency by a factor of three). Fused kernel operators are model specific, and different model parallel libraries take different approaches. DeepSpeed created an inject policy for each model family, with handwritten PyTorch modules and CUDA kernels that speed up parts of the model. Meanwhile, FasterTransformer rewrites the model in pure C++ and CUDA to speed up the model as a whole. PyTorch 2.0 offers an open portal (via torch.compile) to allow easy compilation into different platforms. To optimize price performance for LLMs on SageMaker, we offer SageMaker LMI containers that provide the best open-source compilation stack for each model, like T5 with FasterTransformer and GPT-J with DeepSpeed.

Compilation or integration to optimized runtime

ML compilers, such as Amazon SageMaker Neo, apply techniques such as operator fusion, memory planning, graph optimizations, and automatic integration to optimized inference libraries. Because inference includes only a forward propagation, intermediate tensors between layers are discarded instead of stored for reuse in back-propagation. The graph optimization techniques improve the inference throughput and have a small impact on model memory footprints. Relative to other optimization techniques, compilation for inference provides a limited benefit for reducing a model’s memory requirements. Several runtime libraries for GPU are available today, such as FasterTransformer, TensorRT, and ONNX Runtime.

Model compression

Model compression is a collection of approaches that researchers and practitioners can use to reduce the size of their model, realize faster speed, and reduce hosting cost. Model compression techniques primarily include knowledge distillation, pruning, and quantization. Most compression technologies are challenging for LLMs due to requiring additional training cycles to improve the accuracy of compressed models.

Quantization

Quantization is the process of mapping values from a larger or continuous set of numbers to a smaller set of numbers (for example, INT8 {-128:127}, uINT8 {0:255}). Using a smaller set of numbers reduces memory use and complexity of computations, but the decreased precision can degrade the accuracy of the model. The level of quantization can be adjusted to fit size constraints and accuracy needs. For example, a model quantized to FP8 will be about half the size of a model in FP16 but at the expense of reduced accuracy.
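To make the mapping concrete, the following is a minimal sketch of symmetric INT8 quantization in plain Python. The function names and the single per-tensor scale are illustrative assumptions, not the scheme used by any particular library.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats; the rounding error is the accuracy cost."""
    return [q * scale for q in quantized]


weights = [0.02, -1.27, 0.635, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each restored value differs from the original by at most half a quantization step (`scale / 2`), which is the precision traded for the smaller representation.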

Quantization has shown great and consistent success for inference tasks by reducing the size of the model up to 75%, offering 2–4 times throughput improvements and cost savings.

Quantization succeeds because it’s broadly applicable across a range of models and use cases, with approximately 1% accuracy/score loss if a proper technique is used. It doesn’t require changing the model architecture. Typically, it starts with an existing floating-point model and quantizes it to obtain a fixed-point quantized model. Quantizing from FP32 to INT8 reduces the model size by 75%, but the accuracy/score loss impact is often less than a point.
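The size figures follow directly from the number of bytes stored per parameter. The following sketch shows the arithmetic; it counts weights only and ignores activations and other runtime memory, and the helper names are hypothetical.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def size_reduction(from_dtype: str, to_dtype: str) -> float:
    """Fraction of weight memory saved by moving to a lower precision."""
    return 1 - BYTES_PER_PARAM[to_dtype] / BYTES_PER_PARAM[from_dtype]


# A 176-billion-parameter model stored at 2 bytes per parameter.
print(weight_footprint_gb(176e9, "fp16"))
# Saving when going from FP32 to INT8.
print(size_reduction("fp32", "int8"))
```

At 2 bytes per parameter, 176 billion parameters come to roughly 350 GB, and moving from 4 bytes to 1 byte per parameter removes three-quarters of the weight memory.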

Distillation

With distillation, a larger teacher model transfers knowledge to a smaller student model. The model size can be reduced until the student model can fit on an edge device or smaller cloud-based hardware, but accuracy decreases as the model is reduced. There is no industry standard for distillation, and many techniques are experimental. Distillation requires more work by the customer in tuning and trial and error to shrink the model without affecting accuracy. For more information, refer to Knowledge distillation in deep learning and its applications.

Pruning

Pruning is a model compression technique that reduces the number of operations by removing parameters. To minimize the impact to model accuracy, parameters are first ranked by importance. Parameters that are less important are set to zero or connections to the neuron are removed. This decreases the number of operations with minimal impact to model accuracy. For example, when using a pre-trained model for a narrow use case, parts of the larger model that are less relevant to your application could be pruned away to reduce size without significantly degrading performance for your task.
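As an illustration of ranking parameters by importance, the following sketch implements magnitude pruning, one common heuristic in which the absolute value of a weight stands in for its importance. The function name and the flat list of weights are simplifying assumptions.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered from least to most important (importance = |w|).
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:num_to_prune]:
        pruned[i] = 0.0
    return pruned


print(magnitude_prune([0.9, -0.05, 0.4, 0.01, -0.7, 0.2], sparsity=0.5))
```

In practice, pruned models are usually fine-tuned afterward to recover accuracy, and the zeros only save compute when the runtime can exploit sparsity.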

Model partitioning

A model that can’t fit on a single accelerator’s memory must be split into multiple partitions. At a high level, there are two fundamental approaches to partitioning the model (model parallelism): tensor parallelism and pipeline parallelism.

Tensor parallelism is also called intra-layer model parallelism. In this approach, each one of the layers is partitioned across the workers (accelerators). On the positive side, we can handle models with very large layers, because the layers are split across workers. Therefore, we no longer need to fit at least a single layer on a worker, as is required for pipeline parallelism. However, this leads to an all-to-all communication pattern between the workers after each one of the layers, so there’s a heavy burden on the GPU/accelerator interconnect.
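The following sketch illustrates the idea on a single linear layer: the weight matrix is split by columns, each shard is multiplied independently (as it would be on its own accelerator), and the partial outputs are gathered. It runs sequentially on the CPU with plain lists, so it shows the math only, not the communication.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix multiplication on nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def split_columns(w: Matrix, num_workers: int) -> List[Matrix]:
    """Split a weight matrix into equal column blocks, one per worker."""
    cols = len(w[0])
    if cols % num_workers:
        raise ValueError("column count must be divisible by num_workers")
    step = cols // num_workers
    return [[row[i * step:(i + 1) * step] for row in w] for i in range(num_workers)]


def tensor_parallel_matmul(x: Matrix, w: Matrix, num_workers: int) -> Matrix:
    """Compute x @ w from per-worker column shards, then gather the results."""
    partials = [matmul(x, shard) for shard in split_columns(w, num_workers)]
    # Gather step: concatenate each worker's output columns row by row.
    return [sum((p[r] for p in partials), []) for r in range(len(x))]


x = [[1, 2], [3, 4]]
w = [[1, 0, 2, 1], [0, 1, 1, 3]]
print(tensor_parallel_matmul(x, w, num_workers=2) == matmul(x, w))
```

The gather step is where the interconnect cost comes from: every worker must exchange its slice of the output before the next layer can run.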

Pipeline parallelism partitions the model into layers. Each worker may end up with having one or more layers. This approach uses point-to-point communication and therefore introduces lower communication overhead compared to tensor parallelism. However, this approach won’t be useful if a layer can’t fit into a single worker’s or accelerator’s memory. This approach is also prone to pipeline idleness and may reduce the scaling efficiency.
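A small sketch of how layers might be assigned to pipeline stages, together with a rough estimate of idle time. The contiguous split is a hypothetical policy, and the idle-time formula assumes a simple GPipe-style schedule with equal-cost stages; real frameworks may balance and schedule differently.

```python
from typing import List


def assign_layers_to_stages(num_layers: int, num_workers: int) -> List[List[int]]:
    """Split layer indices into contiguous, near-equal pipeline stages."""
    if num_workers <= 0:
        raise ValueError("num_workers must be positive")
    base, extra = divmod(num_layers, num_workers)
    stages, start = [], 0
    for worker in range(num_workers):
        size = base + (1 if worker < extra else 0)
        stages.append(list(range(start, start + size)))
        start += size
    return stages


def bubble_fraction(num_stages: int, num_micro_batches: int) -> float:
    """Share of time workers sit idle: (p - 1) / (m + p - 1)."""
    return (num_stages - 1) / (num_micro_batches + num_stages - 1)


print(assign_layers_to_stages(10, 4))
print(bubble_fraction(4, 1), bubble_fraction(4, 8))
```

With a single request in flight, three of four stages are idle at any moment; splitting work into more micro-batches shrinks that idle share.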

Open-source frameworks like DeepSpeed, Hugging Face Accelerate, and FasterTransformer apply model-specific optimizations when sharding a model. In DeepSpeed in particular, the partitioning algorithm is tightly coupled with fused kernel operators. SageMaker LMI containers come with pre-integrated model partitioning frameworks like FasterTransformer, DeepSpeed, Hugging Face Accelerate, and Transformers-NeuronX. Currently, DeepSpeed, FasterTransformer, and Hugging Face Accelerate shard the model at model loading time. Runtime model partitioning can take more than 10 minutes (OPT-66B) and consume extensive CPU, GPU, and accelerator memory. Ahead-of-time (AOT) partitioning can help reduce model loading times. With AOT, models are partitioned before deployment, and the partitions are kept ready for downstream optimization and subsequent ingestion by model parallel frameworks. When model parallel frameworks are fed already partitioned models, runtime partitioning doesn’t happen. This improves model loading time and reduces CPU, GPU, and accelerator memory consumption. DeepSpeed and FasterTransformer support pre-partitioning and saving models.

Prompt engineering

Prompt engineering refers to efforts to extract accurate, consistent, and fair outputs from large models, such as text-to-image synthesizers or large language models. LLMs are trained on large-scale bodies of text, so they encode a great deal of factual information about the world. A prompt consists of text and optionally an image given to a pre-trained model for a prediction task. A prompt text may consist of additional components like context, task (instruction, question, and so on), image or text, and training samples. Prompt engineering also provides a way for LLMs to do few-shot generalization, in which a machine learning model trained on a set of generic tasks learns a new or related task from just a handful of examples. For more information, refer to EMNLP: Prompt engineering is the new feature engineering. Refer to the following GitHub repo for more information about getting the most out of your large models using prompt engineering on SageMaker.
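As a small illustration of few-shot prompting, the following sketch assembles an instruction, a handful of worked examples, and a new query into one prompt string. The `Input:`/`Output:` layout and the function name are hypothetical; the best format depends on the model.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str, examples: List[Tuple[str, str]], query: str
) -> str:
    """Combine an instruction, labeled examples, and a query into one prompt."""
    lines = [instruction, ""]
    for text, label in examples:
        lines += [f"Input: {text}", f"Output: {label}", ""]
    # The final block is left open so the model supplies the answer.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [("Great battery life.", "positive"), ("Stopped working in a week.", "negative")],
    "The screen is sharp and bright.",
)
print(prompt)
```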

Model downloading and loading

Large language models incur long download times (for example, 40 minutes to download BLOOM-176B). In 2022, SageMaker Hosting added support for larger Amazon Elastic Block Store (Amazon EBS) volumes up to 500 GB, a longer download timeout of up to 60 minutes, and a longer container startup time of 60 minutes. You can enable this configuration to deploy LLMs on SageMaker. SageMaker LMI containers include model download optimization that uses the s5cmd library to reduce model download and container startup times, which in turn speeds up auto scaling on SageMaker.

Diving deep into SageMaker LMI containers

SageMaker maintains large model inference containers with popular open-source libraries for hosting large models such as GPT, T5, OPT, BLOOM, and Stable Diffusion on AWS infrastructure. With these containers, you can use corresponding open-source libraries such as DeepSpeed, Accelerate, FasterTransformer, and Transformers-NeuronX to partition model parameters using model parallelism techniques to use the memory of multiple GPUs or accelerators for inference. Transformers-NeuronX is a model parallel library introduced by the AWS Neuron team for AWS Inferentia and AWS Trainium to support LLMs. It supports tensor parallelism across Neuron cores.

The LMI container uses DJLServing as its pre-built, integrated model server. It includes pre-built, integrated model partitioning frameworks like DeepSpeed, Accelerate, FasterTransformer, and Transformers-NeuronX; supports PyTorch; and comes with cuDNN, cuBLAS, NCCL, and the CUDA Toolkit pre-installed for GPUs, MKL for CPU, and the Neuron SDK and runtime for running models on AWS Inferentia and Trainium.

Pre-integrated model partitioning frameworks in SageMaker LMI containers

SageMaker LMI comes with pre-integrated model partitioning frameworks to suit your performance and model support requirements.

Most of the model parallel frameworks support both pipeline and tensor parallelism. Pipeline parallelism is simpler to implement than tensor parallelism. However, due to its sequential operating nature, it’s slower than tensor parallelism. Pipeline parallelism and tensor parallelism can be combined.

The following table summarizes the different model partitioning frameworks to help you select the right framework for deploying your models on SageMaker.

| | Hugging Face Accelerate | DeepSpeed | FasterTransformer | TransformersNeuronX (Inf2/Trn1) |
| --- | --- | --- | --- | --- |
| Model Parallel | Pipeline Parallelism | Pipeline and Tensor Parallelism | Pipeline and Tensor Parallelism | Tensor Parallelism |
| Load Hugging Face checkpoints | ✓ | ✓ | ✓ | ✓ |
| Runtime partition | . | ✓ | ✓ | ✓ |
| Ahead-of-time partition | . | ✓ | ✓ | . |
| Model partitioning on CPU memory | . | . | ✓ | . |
| Supported models | All Hugging Face models | All GPT family, Stable Diffusion, and T5 family | GPT2/OPT/BLOOM/T5 | GPT2/OPT/GPTJ/GPT-NeoX* |
| Streaming tokens | ✓ | ✓ | . | ✓ |
| Fast model loading | ✓ | ✓ | ✓ | . |
| Model loading speed | Medium | Fast | Fast | . |
| Performance on model types | All other non-optimized models | GPT family | T5 and BLOOM | All supported models |
| Hardware support | CPU/GPU | GPU | GPU | Inf2/Trn1 |
| SM MME support | ✓ | ✓ | ✓ | . |

Large model deployment pipeline on SageMaker

SageMaker LMI containers offer a low-code/no-code mechanism to set up your large model deployment pipeline with the following capabilities:

- Faster model download time using s5cmd

- Pre-built optimized model parallel frameworks including Transformers-NeuronX, DeepSpeed, Hugging Face Accelerate, and FasterTransformer

- Pre-built foundation software stack including PyTorch, NCCL, and MPI

- Low-code/no-code deployment of large models by configuring serving.properties

- SageMaker-compatible containers

The following diagram gives an overview of a SageMaker LMI deployment pipeline you can use to deploy your models.

Deploy a FLAN-T5-XXL model on SageMaker using the newly released LMI container version

FasterTransformer is a library implementing an accelerated engine for the inference of transformer-based neural networks, with a special emphasis on large models, spanning many GPUs and nodes in a distributed manner. FasterTransformer contains the implementation of the highly optimized version of the transformer block that contains the encoder and decoder parts. With this block, you can run the inference of both the full encoder-decoder architectures like T5, as well as encoder-only models such as BERT, or decoder-only models such as GPT. It’s written in C++/CUDA and relies on the highly optimized cuBLAS, cuBLASLt, and cuSPARSELt libraries. This allows you to build the fastest transformer inference pipeline on GPU.

The FasterTransformer model parallel library, an open-source library from NVIDIA, is now available in a SageMaker LMI container, adding support for popular models such as flan-t5-xxl and flan-ul2.

Runtime architecture of hosting a model using an LMI container’s FasterTransformer engine on SageMaker

The FasterTransformer engine in an LMI container supports loading model weights from an Amazon Simple Storage Service (Amazon S3) path or the Hugging Face Hub. After fetching the model, it converts the Hugging Face model checkpoint to FasterTransformer-supported partitioned model artifacts based on input parameters like tensor parallel degree, and loads the partitioned model artifacts across GPU devices. It loads models faster by using multi-process loading in Python, supports AOT compilation, and uses the CPU to partition the model.

SageMaker LMI containers speed up model downloads from Amazon S3 using s5cmd. They also provide the FasterTransformer engine, a layer of abstraction for developers that loads the model in Hugging Face checkpoint or PyTorch bin format and uses the FasterTransformer library to convert it into a FasterTransformer-compatible format. These steps happen during container startup, so the model is loaded in memory before inference requests come in. The FasterTransformer engine provides high-performance C++ and CUDA implementations for the models to run inference. Together, this helps improve the container startup time and reduce the inference latency. The following diagram illustrates the runtime architecture of serving models using FasterTransformer on SageMaker. For more information about DJLServing’s runtime architecture, refer to Deploy large models on Amazon SageMaker using DJLServing and DeepSpeed model parallel inference.

Use SageMaker LMI container images

To use a SageMaker LMI container to host a FLAN-T5 model, we have a no-code option or a bring-your-own-script option. We showcase the bring-your-own-script option in this post. The first step in the process is to use the right LMI container image. An example notebook is available in the GitHub repo.

Use the following code to use the SageMaker LMI container image after replacing the Region with the specific Region you’re running the notebook in:
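The original listing isn’t reproduced here, so the following is a minimal sketch of building the image URI. The registry account ID and the `djl-inference` repository name are assumptions to verify against the published list of available deep learning container images, and the tag is deliberately left as a placeholder.

```python
def lmi_image_uri(region: str, tag: str, account_id: str = "763104351884") -> str:
    """Build the Amazon ECR URI of an LMI container image for a Region.

    The default account ID and repository name are assumptions; confirm them
    for your Region before use.
    """
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/djl-inference:{tag}"


# Replace the Region and the placeholder tag with the values for your setup.
inference_image_uri = lmi_image_uri("us-east-1", "<fastertransformer-image-tag>")
print(f"Image going to be used: {inference_image_uri}")
```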

Download the model weights

An LMI container allows us to download the model weights from the Hugging Face Hub at run time when spinning up the instance for deployment. However, that takes longer because it’s dependent on the network and on the provider. The faster option is to download the model weights into Amazon S3 and then use the LMI container to download them to the container from Amazon S3. This is also a preferred method when we need to scale up our instances. In this post, we showcase how to download the weights to Amazon S3 and then use them when configuring the container. See the following code:
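A sketch of this step, assuming the `huggingface_hub` package and a `sagemaker.Session` object are available in your notebook. The helper name, the file patterns, and the key prefix are hypothetical choices for illustration.

```python
from pathlib import Path


def stage_weights_in_s3(repo_id: str, local_dir: str, sagemaker_session, key_prefix: str) -> str:
    """Download a model snapshot from the Hugging Face Hub and upload it to Amazon S3.

    `sagemaker_session` is expected to be a sagemaker.Session. Returns the S3
    URI reported by the upload.
    """
    # Imported lazily so the module loads even where the package is absent.
    from huggingface_hub import snapshot_download

    Path(local_dir).mkdir(parents=True, exist_ok=True)
    download_path = snapshot_download(
        repo_id=repo_id,
        cache_dir=local_dir,
        # Assumed patterns: fetch only weights, configs, and tokenizer files.
        allow_patterns=["*.json", "*.bin", "*.model", "*.txt"],
    )
    return sagemaker_session.upload_data(path=download_path, key_prefix=key_prefix)


# Example call (requires network access and AWS credentials):
# import sagemaker
# model_s3_uri = stage_weights_in_s3(
#     "google/flan-t5-xxl", "./model", sagemaker.Session(), "hf-large-model/flan-t5-xxl"
# )
```

The returned S3 URI is the location you reference later when configuring the container.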

Create the model configuration and inference script

First, we create a file called serving.properties that configures the container. This tells the DJL model server to use the FasterTransformer engine to load and shard the model weights. Second, we point to the S3 URI where the model weights are stored. The LMI container will download the model artifacts from Amazon S3 using s5cmd. The file contains the following code:
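The file contents aren’t reproduced here. As a sketch, the following helper writes a serving.properties file with the settings described above. The key names `option.tensor_parallel_degree` and `option.s3url` are assumptions based on DJLServing conventions, so check them against the DJLServing configuration documentation for your container version.

```python
from pathlib import Path


def write_serving_properties(code_dir: str, s3_url: str, tensor_parallel_degree: int) -> Path:
    """Write a serving.properties file that selects the FasterTransformer engine."""
    lines = [
        "engine=FasterTransformer",
        # Number of GPUs the model is sharded across (assumed key name).
        f"option.tensor_parallel_degree={tensor_parallel_degree}",
        # S3 prefix holding the model weights (assumed key name).
        f"option.s3url={s3_url}",
    ]
    path = Path(code_dir) / "serving.properties"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text("\n".join(lines) + "\n")
    return path


# Example: write_serving_properties("./code", "s3://<bucket>/<prefix>/", 4)
```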

For the no-code option, the key changes are to specify the entry_point as the built-in handler. We specify the value as djl_python.fastertransformer. For more details, refer to the GitHub repo. You can use this code to modify for your own use case as needed. A complete example that illustrates the no-code option can be found in the following notebook. The serving.properties file will now look like the following code:
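As an illustration of that change, the following sketch takes existing serving.properties text and sets the entry point to the built-in handler. The property key `option.entryPoint` is an assumption; the handler value `djl_python.fastertransformer` is the one named above.

```python
def use_builtin_handler(
    properties_text: str, entry_point: str = "djl_python.fastertransformer"
) -> str:
    """Return serving.properties text with the entry point set to a built-in handler."""
    kept = [
        line
        for line in properties_text.splitlines()
        # Drop blank lines and any earlier entry point setting.
        if line.strip() and line.split("=", 1)[0].strip() != "option.entryPoint"
    ]
    kept.append(f"option.entryPoint={entry_point}")
    return "\n".join(kept) + "\n"


base = "engine=FasterTransformer\noption.tensor_parallel_degree=4\n"
print(use_builtin_handler(base))
```

With the built-in handler selected, no model.py file is needed in the model artifact.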

Next, we create our model.py file, which defines the code needed to load and then serve the model. The only mandatory method is handle(inputs). We continue to use the functional programming paradigm to build the other helpful methods like load_model(), pipeline_generate(), and more. In our code, we read in the tensor_parallel_degree property value (the default value is 1). This sets the number of devices over which the tensor parallel modules are distributed. Second, we get the model weights, which are downloaded under the /tmp location on the container and referenceable by the environment variable “model_dir”. To load the model, we use the FasterTransformer init method as shown in the following code. Note that we load the full-precision weights in FP32. You can also quantize the model at runtime by setting dtype = "fp16" in the following code and setting tensor_parallel_degree = 2 in serving.properties. However, the FP16 version of this model may not match the output quality of the FP32 version. In addition, refer to an existing issue related to the impact on model quality on FasterTransformer for the T5 model for certain NLP tasks.
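The model.py listing isn’t reproduced here, so the following is a hedged sketch of what such a handler could look like. It assumes the container provides a `fastertransformer` Python module with an `init_inference(model_location, tensor_parallel_degree, pipeline_parallel_degree, dtype=...)` function whose returned object offers `pipeline_generate`, and that requests carry `inputs` and `parameters` fields. Verify each of these against the accompanying notebook before relying on them.

```python
import logging

_model = None  # Loaded once per worker process and reused across requests.


def load_model(properties: dict):
    """Load and shard the model with the FasterTransformer engine."""
    # Only importable inside the LMI container (assumed module and signature).
    import fastertransformer as ft

    tensor_parallel_degree = int(properties.get("tensor_parallel_degree", 1))
    model_location = properties["model_dir"]
    logging.info("Loading model from %s", model_location)
    # Pipeline parallel degree is fixed at 1 here; weights load in FP32.
    return ft.init_inference(model_location, tensor_parallel_degree, 1, dtype="fp32")


def handle(inputs):
    """Entry point called by the model server for every request."""
    from djl_python import Output

    global _model
    if _model is None:
        _model = load_model(inputs.get_properties())
    if inputs.is_empty():
        # The server sends an empty request at startup to warm up the model.
        return None
    data = inputs.get_as_json()
    outputs = _model.pipeline_generate(data["inputs"], **data.get("parameters", {}))
    return Output().add_as_json({"outputs": outputs})
```

Keeping the model in a module-level variable means the expensive load happens once, on the first call, instead of on every request.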

Create a SageMaker endpoint for inference

In this section, we go through the steps to create a SageMaker model and endpoint for inference.

Create a SageMaker model

We now create a SageMaker model. We use the LMI container image from Amazon Elastic Container Registry (Amazon ECR) and the model artifact from the previous step to create the SageMaker model. In the model setup, we set tensor_parallel_degree to 4 in serving.properties, which means the model is partitioned across four GPUs. See the following code:
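A minimal sketch using the boto3 SageMaker client’s `create_model` call. The helper name and arguments are hypothetical; the client is passed in so you can create it with `boto3.client("sagemaker")` in your own Region.

```python
def create_lmi_model(sm_client, model_name: str, role_arn: str,
                     image_uri: str, code_artifact_s3_uri: str) -> str:
    """Register a SageMaker model that pairs the LMI image with the model artifact."""
    response = sm_client.create_model(
        ModelName=model_name,
        ExecutionRoleArn=role_arn,
        PrimaryContainer={
            "Image": image_uri,
            # Tarball containing serving.properties and, optionally, model.py.
            "ModelDataUrl": code_artifact_s3_uri,
        },
    )
    return response["ModelArn"]


# Example call (requires AWS credentials):
# import boto3
# arn = create_lmi_model(boto3.client("sagemaker"), "<model-name>", "<role-arn>",
#                        "<image-uri>", "s3://<bucket>/<prefix>/model.tar.gz")
```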

Create a SageMaker endpoint for inference

You can use any instance with multiple GPUs for testing. In this demo, we use a g5.12xlarge instance. In the following code, note how we set ModelDataDownloadTimeoutInSeconds and ContainerStartupHealthCheckTimeoutInSeconds. We don’t set the VolumeSizeInGB parameter because this instance comes with SSD storage. The VolumeSizeInGB parameter is applicable to GPU instances that support EBS volume attachment.
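A sketch of the endpoint configuration using the boto3 `create_endpoint_config` call. The variant name and the 3,600-second timeouts are illustrative assumptions (the 60-minute limits mentioned earlier), and `VolumeSizeInGB` is intentionally omitted.

```python
def build_production_variant(model_name: str, instance_type: str = "ml.g5.12xlarge",
                             timeout_seconds: int = 3600) -> dict:
    """Describe one production variant with extended download and startup timeouts."""
    return {
        "VariantName": "variant1",  # hypothetical name
        "ModelName": model_name,
        "InstanceType": instance_type,
        "InitialInstanceCount": 1,
        # Allow time to download large weights and load them onto the GPUs.
        "ModelDataDownloadTimeoutInSeconds": timeout_seconds,
        "ContainerStartupHealthCheckTimeoutInSeconds": timeout_seconds,
    }


def create_endpoint_config(sm_client, config_name: str, model_name: str) -> str:
    """Create the endpoint configuration and return its ARN."""
    response = sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=[build_production_variant(model_name)],
    )
    return response["EndpointConfigArn"]


print(build_production_variant("<model-name>"))
```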

Lastly, we create a SageMaker endpoint:
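A sketch of this step with the boto3 SageMaker client, using its `endpoint_in_service` waiter to block until the endpoint is ready. The helper name is hypothetical.

```python
def create_endpoint_and_wait(sm_client, endpoint_name: str, config_name: str) -> str:
    """Create the endpoint, wait until it is in service, and return its status."""
    sm_client.create_endpoint(EndpointName=endpoint_name, EndpointConfigName=config_name)
    # Polls the endpoint until it is in service; raises if creation fails.
    sm_client.get_waiter("endpoint_in_service").wait(EndpointName=endpoint_name)
    return sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointStatus"]


# Example call (requires AWS credentials):
# import boto3
# status = create_endpoint_and_wait(boto3.client("sagemaker"),
#                                   "<endpoint-name>", "<endpoint-config-name>")
```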

Starting the endpoint might take a few minutes. You can try a few more times if you run into the InsufficientInstanceCapacity error, or you can raise a request to AWS to increase the limit in your account.

Invoke the model

This is a generative model, so we pass in text as a prompt, and the model completes the sentence and returns the results.

You can pass a batch of prompts as input to the model. This is done by setting inputs to the list of prompts. The model then returns a result for each prompt. The text generation can be configured using appropriate parameters.
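A minimal invocation sketch using the boto3 `sagemaker-runtime` client. The JSON request shape (an `inputs` field plus optional `parameters`) and a JSON response body are assumptions that depend on the handler deployed behind your endpoint.

```python
import json


def invoke(smr_client, endpoint_name: str, payload: dict) -> dict:
    """Send a JSON payload to the endpoint and decode the JSON response."""
    response = smr_client.invoke_endpoint(
        EndpointName=endpoint_name,
        Body=json.dumps(payload),
        ContentType="application/json",
    )
    # The response body is a stream; read it fully before decoding.
    return json.loads(response["Body"].read().decode("utf-8"))


# Example call (requires AWS credentials and a running endpoint):
# import boto3
# result = invoke(boto3.client("sagemaker-runtime"), "<endpoint-name>",
#                 {"inputs": ["Translate to German: How are you?"]})
```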

Model parameters at inference time

The following code lists the set of default parameters that is used by the model. You can set these arguments to a specific value of your choice while invoking the endpoint.

The following code has a sample invocation to the endpoint we deployed. We use the max_seq_len parameter to control the number of tokens that are generated and temperature to control the randomness of the generated text.
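As a sketch of the request body for such an invocation, the following helper combines one or more prompts with the two parameters named above. The payload layout and the example values are assumptions, not the defaults used by the model.

```python
from typing import List, Union


def build_payload(prompts: Union[str, List[str]], max_seq_len: int, temperature: float) -> dict:
    """Build a request body for a single prompt or a batch of prompts."""
    if isinstance(prompts, str):
        prompts = [prompts]
    if max_seq_len <= 0:
        raise ValueError("max_seq_len must be positive")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    return {
        "inputs": list(prompts),
        "parameters": {
            "max_seq_len": max_seq_len,  # caps the number of generated tokens
            "temperature": temperature,  # higher values give more random text
        },
    }


payload = build_payload(
    ["Summarize: SageMaker LMI containers simplify large model hosting.",
     "Translate to German: Good morning."],
    max_seq_len=64,
    temperature=0.7,
)
print(payload)
```

The endpoint returns one result per entry in `inputs`, so batching prompts amortizes the cost of a request across several generations.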

Clean up

When you’re done testing the model, delete the endpoint to save costs if the endpoint is no longer required:
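A cleanup sketch with the boto3 SageMaker client. The helper name is hypothetical; it also removes the endpoint configuration and the model, which you can skip if you plan to redeploy.

```python
def delete_endpoint_resources(sm_client, endpoint_name: str,
                              config_name: str, model_name: str) -> None:
    """Delete the endpoint, then its configuration and the model definition."""
    # Deleting the endpoint releases the underlying instances.
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    sm_client.delete_model(ModelName=model_name)


# Example call (requires AWS credentials):
# import boto3
# delete_endpoint_resources(boto3.client("sagemaker"), "<endpoint-name>",
#                           "<endpoint-config-name>", "<model-name>")
```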

Performance tuning

If you intend to use this post and accompanying notebook with a different model, you may want to explore some of the tunable parameters that SageMaker, DeepSpeed, and the DJL offer. Iteratively experimenting with these parameters can have a material impact on the latency, throughput, and cost of your hosted large model. To learn more about tuning parameters such as number of workers, degree of tensor parallelism, job queue size, and others, refer to DJLServing configurations and Deploy large models on Amazon SageMaker using DJLServing and DeepSpeed model parallel inference.

Benchmarking results on hosting FLAN-T5 model on SageMaker

The following table summarizes our benchmarking results.

| Model | Model Partitioning and Optimization Engine | Quantization | Batch Size | Tensor Parallel Degree | Number of Workers | Inference Latency P50 (ms) | Inference Latency P90 (ms) | Inference Latency P99 (ms) | Data Quality |

| flan-t5-xxl | FasterTransformer | FP32 | 4 | 4 | 1 | 327.39 | 331.01 | 612.73 | Normal |

For our benchmark, we combined four different types of tasks into a single batch and benchmarked the Flan-T5-XXL model. FasterTransformer used a tensor parallel degree of 4 (the model is partitioned across four accelerator devices on the same host). In our benchmark, FasterTransformer was the most performant in terms of latency and throughput compared to other frameworks for hosting this model. The p99 inference latency was about 613 milliseconds.

Conclusion

In this post, we gave an overview of large model hosting challenges, and how SageMaker LMI containers help you address these challenges using its low-code/no-code capabilities. We showcased how to host large models using FasterTransformer with high performance on SageMaker using the SageMaker LMI container. We demonstrated this new capability in an example of deploying a FLAN-T5-XXL model on SageMaker. We also covered options available to tune the performance of your models using different model optimization approaches and how SageMaker LMI containers offer low-code/no-code options to you in hosting and optimizing the large models.

About the authors

Dhawal Patel is a Principal Machine Learning Architect at AWS. He has worked with organizations ranging from large enterprises to mid-sized startups on problems related to distributed computing, and Artificial Intelligence. He focuses on Deep learning including NLP and Computer Vision domains. He helps customers achieve high performance model inference on SageMaker.

Rohith Nallamaddi is a Software Development Engineer at AWS. He works on optimizing deep learning workloads on GPUs, building high performance ML inference and serving solutions. Prior to this, he worked on building microservices based on AWS for Amazon F3 business. Outside of work he enjoys playing and watching sports.

Robert Van Dusen is a Senior Product Manager with Amazon SageMaker. He leads deep learning model optimization for applications such as large model inference.

Rupinder Grewal is a Sr Ai/ML Specialist Solutions Architect with AWS. He currently focuses on serving of models and MLOps on SageMaker. Prior to this role he has worked as Machine Learning Engineer building and hosting models. Outside of work he enjoys playing tennis and biking on mountain trails.

Pinak Panigrahi works with customers to build machine learning driven solutions to solve strategic business problems on AWS. When not occupied with machine learning, he can be found taking a hike, reading a book or catching up with sports.

Qing Lan is a Software Development Engineer in AWS. He has been working on several challenging products in Amazon, including high performance ML inference solutions and high performance logging system. Qing’s team successfully launched the first Billion-parameter model in Amazon Advertising with very low latency required. Qing has in-depth knowledge on the infrastructure optimization and Deep Learning acceleration.
